Creating a compliance-ready Document AI system means embedding regulatory requirements into every step of its design. Whether you’re working in healthcare, finance, or other regulated industries, you need to address strict rules like HIPAA, GDPR, and SOC 2. Here’s what you need to focus on:

- Data Management: Implement retention and deletion policies to minimize storage of sensitive data. Use tools like redaction layers to filter out PII before processing.

- Audit Logging: Maintain tamper-proof, structured logs that track every event, from data ingestion to output, ensuring you can reconstruct actions months later.

- Access Control: Use role-based and attribute-based controls to limit who can access sensitive data, ensuring permissions align with compliance standards.

- Model Governance: Document training data, monitor model outputs for accuracy, and ensure explainability through structured logs and rationales.

- Vendor Compliance: Only work with third-party providers who meet regulatory requirements and have signed agreements like BAAs or DPAs.

Auditors will scrutinize your system’s data flows, logging, and governance. By designing with compliance in mind, you can avoid costly retrofits and ensure your system passes audits without issues.

114. AI Legal & Regulatory Portal Demo | Audit Compliance 10X Faster with This AI Legal Portal

sbb-itb-51b9a02

Compliance Requirements for Document AI Systems

Document AI systems operating in regulated industries must adhere to a variety of regulations, each imposing its own technical demands.

Key Regulations and Standards

Understanding the key standards your system needs to comply with is a crucial first step:

HIPAA governs the handling of electronic Protected Health Information (ePHI). To comply, systems must implement encryption, access controls, and tamper-evident audit logs retained for at least six years, as specified in the Security Rule (§ 164.302–318) [2][11]. Additionally, any third-party services – such as LLM APIs, vector databases, or logging tools – must have a signed Business Associate Agreement (BAA) before processing ePHI. These requirements directly influence how you design data pipelines and select vendors.

SOC 2 evaluates systems based on five principles: Security, Availability, Processing Integrity, Confidentiality, and Privacy. For AI systems, meeting the Processing Integrity requirement can be particularly challenging. You need to define what constitutes "accurate" output for a probabilistic model and demonstrate this accuracy through monitoring data [12]. This calls for integrating measurable output validation into the system’s design.

CCPA/CPRA applies to systems processing personal data of California residents. These regulations demand data minimization and clear policies for collecting, storing, and deleting Personally Identifiable Information (PII). As a result, these rules affect your data ingestion processes and retention schedules.

NIST AI RMF provides a framework for AI risk management, structured around four core functions: Govern, Map, Measure, and Manage. It emphasizes explainability, bias mitigation, and reliability [12][13]. Each function translates into specific engineering controls that your system must implement.

FedRAMP Moderate mandates compliance with 323 security controls based on NIST SP 800-53, while FedRAMP High requires 410 controls [13]. The number of controls depends on the sensitivity of the data your system handles, which directly impacts the depth and complexity of your security measures.

"A probabilistic system cannot guarantee deterministic outputs. Auditors need to understand how you define and measure ‘correct’ processing for a non-deterministic system." – BeyondScale Team [12]

What Auditors Look For

Auditors are increasingly focused on verifying that controls are actively implemented, rather than simply reviewing documented policies. They look for evidence such as logs, training records, configuration screenshots, and signed agreements to confirm compliance in production environments [16].

Key areas of focus include:

- Model versioning: Auditors expect a model registry (e.g., MLflow, SageMaker) that assigns unique identifiers to models and links them to specific training datasets [12].

- Customer data usage: They examine both written data usage policies and technical configurations that enforce these policies by default [1][12].

- Incident response: Auditors want to see documented, AI-specific playbooks that outline steps for addressing incorrect or harmful outputs [12][13].

When it comes to data management, auditors also check for clear retention schedules, evidence that PII is redacted or tokenized before reaching the LLM, and "break-glass" procedures for emergency access. These procedures must trigger high-priority audit events and notify privacy officers immediately [2]. These requirements heavily influence how data flows and system logs are designed.

It’s worth noting that integrating accountability controls during the system architecture phase can save significant costs – up to four to six times less than retrofitting them after development [17]. These audit expectations play a critical role in shaping compliant data flows and robust logging systems, which are explored further in subsequent sections.

Designing Compliant Data Flows

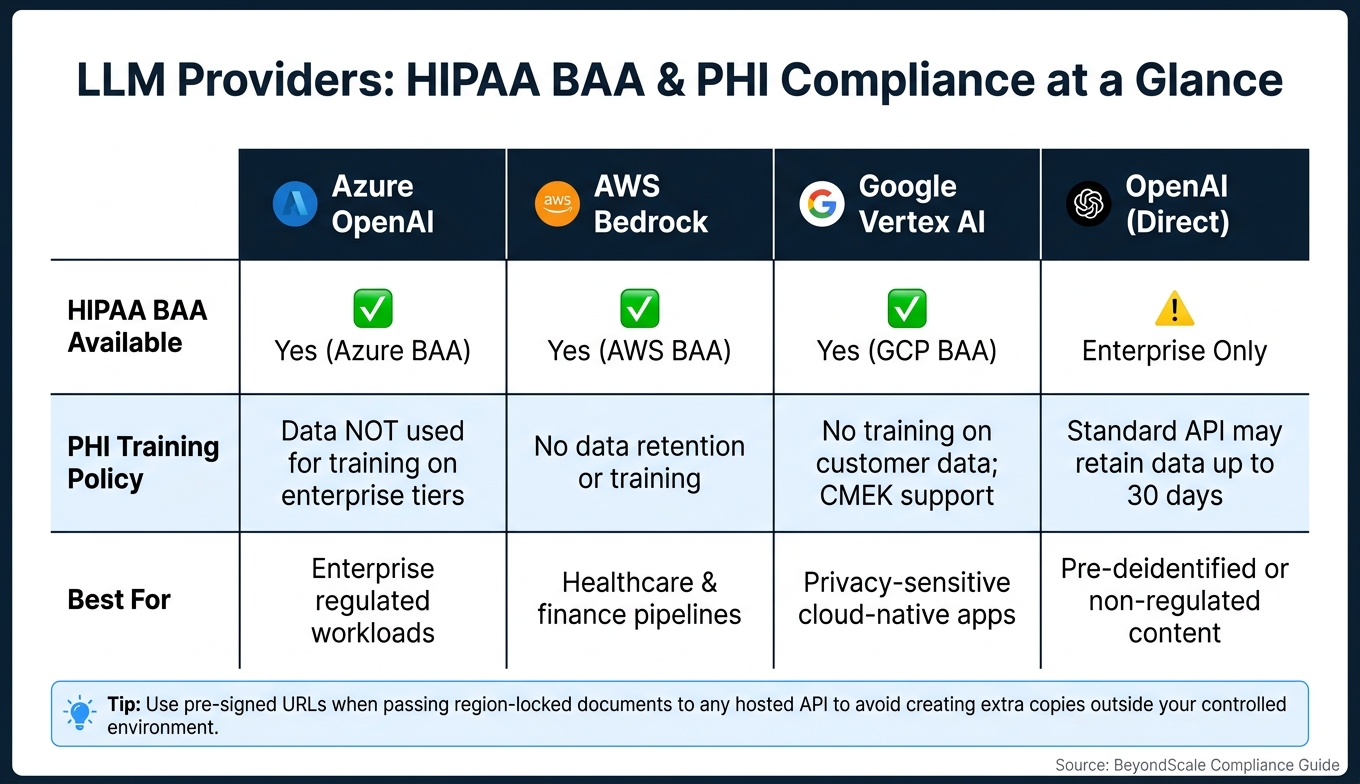

LLM Providers: HIPAA BAA & PHI Compliance Comparison

To ensure compliance and avoid audit failures, it’s critical to map every step of your data’s journey – from ingestion to error handling, observability, and training. Missing even a single step in this process is a common reason audits fail [15]. Alongside this, enforce strict policies for data retention and secure deletion to maintain a compliant data flow.

Data Retention and Deletion Policies

Keeping data for the shortest necessary period is one of the safest strategies. For example, reducing default retention from 365 days to just 30 days can lower the amount of sensitive data stored by 80% to 95% [21]. Temporary artifacts should be deleted immediately using tools like S3 lifecycle policies [19][21].

For event-based deletion triggers, such as CCPA requests, use idempotent API workflows. This ensures that repeated deletion requests yield the same result without duplicating log entries, keeping your audit trail clean [8]. These workflows must cascade across all storage locations the data has touched – this includes primary databases, vector indexes, caches, and any derived outputs.

Additionally, limit the exposure of your data to external systems wherever possible.

Reducing External Data Exposure

Before sending documents to external LLM APIs, route them through an internal redaction layer. At this stage, OCR and PII detection tools can scan the data, replacing sensitive elements with opaque tokens (e.g., "John Doe" becomes [PATIENT_001]). The actual PII is stored securely in an encrypted token vault, ensuring that the LLM only processes synthetic data [2][10].

For deployment, a tiered model architecture can significantly reduce your exposure. Use locally deployed open-weight models like Llama 3 or Mistral for sensitive or regulated data, while reserving hosted APIs for pre-deidentified content [20]. When using hosted APIs, choose providers that offer signed Business Associate Agreements (BAAs) and region-specific endpoints to comply with data residency requirements. Here’s a quick comparison of major providers:

| LLM Provider | HIPAA BAA Available | PHI Handling Policy |

|---|---|---|

| Azure OpenAI | Yes (Azure BAA) | Data not used for training on enterprise tiers [15] |

| AWS Bedrock | Yes (AWS BAA) | No data retention or training on Bedrock [15] |

| Google Vertex AI | Yes (GCP BAA) | No training on customer data; CMEK support [15] |

| OpenAI (Direct) | Enterprise only | Standard API may retain data for 30 days [15] |

One practical tip: If your documents are stored in region-locked storage, use pre-signed URLs to pass them to the processing API. This avoids creating extra copies of the document outside your controlled environment [18].

Proving Deletion and Data Minimization

Auditors need clear, verifiable evidence of data deletion and minimization. Every deletion event should include a structured log entry detailing the who, what, when, and why [10][5]. Adding a concise reason code, such as "user_deletion_request_ccpa", can provide additional clarity.

For the most reliable proof of deletion, consider crypto-shredding. This involves encrypting data at rest with customer-managed keys (CMKs) and deleting the key when the data needs to be purged. Without the key, the encrypted data becomes permanently inaccessible, and the key deletion event itself serves as a clear, verifiable record [15]. Pair this approach with WORM storage (like S3 Object Lock) for audit logs to create an immutable evidence trail.

"If your auditor cannot reconstruct exactly what the model saw, and exactly what was returned, six months after the call, you do not have an audit log. You have a debug stream." – Veklom Field Notes [2]

To avoid accidental PII leakage, apply logging filters to redact sensitive information in outbound logs. This prevents developers from unintentionally writing raw user input into tools like Sentry or Datadog – an increasingly common focus for auditors [6]. Together, these practices create a secure and compliant data flow that meets the rigorous demands of audits and regulations.

Audit Logging and Traceability

When it comes to compliance audits, having a reliable data flow is only part of the equation. What truly matters is maintaining a verifiable record of every data-related event. Compliance auditors don’t just want to see that your system functions – they need to reconstruct every decision, access event, and model activity from a clear, tamper-proof log. This goes far beyond the scope of standard application logging.

"A conversation log is a debug tool. A compliance audit log records governance events: content policy matches, PII detections, security intercepts, and model access decisions." – Luke S Suneja, Client Partner, Sphere Partners [22]

What to Log in a Document AI System

One common mistake is treating audit trails like debug logs. They serve entirely different purposes. Debug logs are often noisy, sampled, and temporary. Audit trails, on the other hand, need to be structured, durable, and complete.

For a document AI system, you should log the following event categories, each with its own structured entry:

| Event Category | What to Capture | Why It Matters |

|---|---|---|

| Ingestion | Source ID, cryptographic hash, intake timestamp | Establishes chain of custody |

| Extraction | Model version, schema version, confidence scores | Proves how data was derived |

| Governance | PII detection events, policy matches, threat intercepts | Demonstrates enforcement of safety controls |

| Human Review | Reviewer ID, original vs. corrected value, reason code | Documents human oversight decisions |

| Output | Generation template, delivery destination, status | Tracks where data went and in what format |

| System | Rate limit enforcements, access denials, token counts | Monitors misuse and unauthorized access |

When logging Personally Identifiable Information (PII) detection events, avoid storing raw data. Instead, include a reason code (e.g., "claims_adjudication" or "pii_block") to explain policy decisions in terms that even non-technical auditors can follow without needing a developer [5][26].

Correlation IDs are also essential. These IDs link actions across the entire system – frontend, API gateway, OCR pipeline, retrieval layers, and model runners. Without them, piecing together a multi-step document workflow becomes nearly impossible [5][26].

By implementing these practices, you create a solid foundation for tamper-proof records, which are critical for both internal monitoring and external audit readiness.

Making Logs Tamper-Evident

Simply writing logs isn’t enough – auditors need assurance that the logs haven’t been altered. The best way to achieve this is through SHA-256 hash chaining, where each log entry includes a hash of the previous one. If even one record is tampered with, the entire chain breaks, making the alteration immediately detectable [27][24].

For added security, combine hash chaining with WORM (Write Once Read Many) storage. This ensures that once logs are written, they cannot be modified or deleted until the retention period ends [23][25]. To ensure accurate timestamps, synchronize all system clocks using NTP and log events in UTC with millisecond precision. Even minor clock drift can disrupt the ability to reconstruct the sequence of events [27].

To keep logs efficient while still traceable, store a content-addressable hash of retrieved documents or prompts instead of the full text. The full content can be stored separately in a versioned, immutable registry. This approach keeps logs lean but still allows forensic reconstruction when needed [27].

Retention policies depend on regulations. For example:

- FINRA Rule 4511: Requires six years for books and records, including AI-assisted communications.

- DORA Article 17: Mandates a five-year trail for ICT-related incidents.

- EU AI Act Article 12: Sets a minimum six-month retention for high-risk AI system logs [22].

Design your retention strategy around the strictest applicable regulation for your industry.

Connecting Logs to Monitoring Tools

Audit logs should integrate with a SIEM (Security Information and Event Management) system for real-time alerts and anomaly detection. However, instead of feeding raw logs directly into the SIEM, mirror a safe, PII-free subset of your compliance event stream [5].

Adopt a tiered storage model for efficiency:

- Hot storage: Fully indexed logs for recent events (30–90 days).

- Warm storage: Searchable logs for older data.

- Cold WORM archives: Long-term retention for compliance-critical logs [27].

To prevent latency in inference calls, write logs synchronously to a local durable buffer, then replicate them asynchronously to your central logging system.

Use your logs to track governance KPIs, such as document lineage completeness, weekly policy exceptions, and access denial rates. These metrics provide insights into whether your controls are functioning as intended. They’re also the exact metrics auditors will want to review [23].

"If an auditor can’t independently verify a document’s timeline from your export package, your trail is not audit-ready yet." – OCRbit [25]

Protecting Sensitive Data: PII Detection and Access Control

When it comes to safeguarding sensitive information, it’s not enough to rely on good audit logs. While they can tell you what happened, the key is to have controls in place that determine what should happen before sensitive data ever reaches your systems. Logging a data leak after the fact isn’t compliance – it’s damage control.

PII Detection and Redaction Before LLM Processing

Sensitive data, like Personally Identifiable Information (PII), must be filtered out before it ever interacts with a large language model (LLM). One effective way to do this is by enforcing PII redaction at the API gateway. Running this process in a secure environment, such as a dedicated VPC or an air-gapped system, reduces risks and keeps you in compliance with regulations.

"A PII redaction policy written in a company wiki is not a control. It is a liability waiting to surface in your next HIPAA or GDPR audit." – Daniel Whitenack, Prediction Guard [28]

A combined approach to PII detection works best. Pairing rule-based methods (like regex patterns for Social Security numbers or birth dates) with contextual NLP analysis consistently delivers better results, especially for unstructured data. For example, NLP-based tools can identify and redact Protected Health Information (PHI) from clinical text with a recall rate of 90–98% [15].

When dealing with HIPAA compliance, organizations typically choose one of two methods:

- Safe Harbor: This involves mechanically removing 18 specific identifiers, such as names, detailed geographic information, and most date elements.

- Expert Determination: Here, a statistician certifies that the likelihood of re-identifying individuals is extremely low.

The Safe Harbor method is easier to audit, while Expert Determination allows for more flexibility when certain data points are necessary for analysis [28][2][11].

For added security, tokenization can be applied to sensitive fields, further reducing risks.

Role-Based Access Control for Documents

Not everyone in your organization needs the same level of access to sensitive documents. For instance, a clinician reviewing patient records has different access needs than an engineer analyzing model outputs. Your access control system must reflect these distinctions clearly.

A layered approach combining Role-Based Access Control (RBAC) with Attribute-Based Access Control (ABAC) can effectively manage these differences. RBAC handles broad permissions across the organization, while ABAC fine-tunes access based on specific conditions like document classification, user intent, or even location [11][29].

Key practices to strengthen access control include:

- Attaching persistent metadata tags to documents at the time of ingestion.

- Using short-lived, purpose-specific JWTs for access.

- Requiring multi-party approval for changes to sensitive data access.

Special attention is also needed for AI agents. Instead of using shared API keys, these agents should inherit the verified identity of the user initiating the action through OAuth JWT or OIDC claims. This ensures every action is traceable back to an authenticated individual [30][11].

These measures work hand-in-hand with other efforts to limit unnecessary exposure of sensitive data.

Limiting Data Exposure During Processing

To minimize risk, follow the "minimum necessary" principle: retrieve only the specific sections of a document required for the task at hand. This approach aligns with GDPR’s data minimization requirements and helps prevent overexposure of sensitive information.

One often overlooked vulnerability lies in observability pipelines. Raw prompts containing PII or PHI can unintentionally end up in third-party monitoring tools, analytics platforms, or debug logs. This creates a hidden repository of sensitive data. In early-stage AI SaaS setups, it’s estimated that 30–50% of stored PII ends up outside the primary database [21]. To address this, implement a dedicated PII-scrubbing layer between your AI agent and logging infrastructure [11].

Model Governance and Explainability

Strong audit trails are only part of the equation. Effective model governance ensures that both training data and AI outputs meet compliance standards and remain explainable.

Training Data Governance

Establishing clear policies for how training data is handled is essential. Start by removing any personally identifiable information (PII), intellectual property, or business secrets before using the data for training. When real data poses risks, synthetic data can step in to maintain statistical patterns without exposing sensitive information.

Maintain an audit packet for every training dataset. This packet should include details like source IDs, timestamps, content hashes, and records of transformations applied.

"Audit-ready means you can reconstruct and defend the full chain from acquisition to output." – Promise Legal [8]

Diversity in training data is also critical to reduce bias, as required by many regulations. For instance, the EU AI Act mandates that high-risk AI systems document their training data sources, preprocessing steps, and quality assurance measures to address potential bias risks [31].

Explainability for AI-Generated Outputs

Explainability can be approached in three main ways:

- Use models that are naturally interpretable.

- Apply post-hoc feature attribution tools like SHAP or LIME.

- Generate structured outputs that include concise rationales and source citations [17].

These methods work hand-in-hand with data handling controls and audit logging to ensure transparency for both internal teams and external regulators.

"An 800-dimensional SHAP vector per decision is not an explanation. A three-sentence rationale with the top five feature contributions and a link to the source records is." – Bo Peng, Precision Federal [17]

Every AI decision log should include essential details: the timestamp, input (or its hash), model ID and version, prompt template, output, and any human overrides [17][26]. For high-stakes applications such as financial transactions, medical diagnostics, or legal rulings, confidence threshold tiers are a must. Low-confidence outputs should be flagged for human review instead of forcing potentially flawed decisions through the system.

Building explainability into your architecture from the start is far more cost-effective than retrofitting it later – by a factor of 4 to 6 [17].

Monitoring Model Performance and Bias

Continuous monitoring is key to catching drift and unintended bias. Over time, models can degrade as factors like document formats, language, or data distributions evolve. A model that performs well at launch may later falter in ways that only become evident during an audit.

Track more than just accuracy. Metrics like these are crucial:

- Redaction completeness: Ensures all sensitive data is properly masked.

- Clause classification accuracy: Measures how well the model identifies specific legal or regulatory sections.

- HITL (human-in-the-loop) turnaround time: Tracks whether human reviews are keeping up with AI outputs [33][7].

For hiring or lending decisions, laws like New York City’s AEDT require a disparate-impact ratio to formally assess bias across protected groups [32].

When a human reviewer corrects an AI-generated output, log both the original AI value and the human-corrected value. This "before-and-after" record demonstrates active quality control and creates a defensible chain of custody [7]. Periodic audit drills are also essential – ensure your system can produce complete provenance records, including hashes and reviewer sign-offs, within 30 minutes [32].

Preparing for a Compliance Audit

Documentation and Evidence Packages

When an auditor – whether internal or external – shows up, having all necessary evidence ready is just as important as having robust controls in place. A good rule of thumb: your team should be able to produce an AI system inventory, relevant log segments, vendor lists, and approval records within 30 minutes [32].

Auditors generally look for a core set of artifacts:

| Artifact | What It Proves | Key Fields |

|---|---|---|

| Model Card | System intent and governance | Version, training scope, metrics, known risks, approvals [4] |

| Data Lineage Record | Chain of custody from ingestion to output | Source system, transformation path, access controls [4] |

| Audit Packet | Defensibility of a specific work product | Source URL, retrieval timestamp, content hash, reviewer sign-off [8] |

| Release Register | History of system changes | Change summary, approver, validation evidence, risk rating [3] |

| Access Control Registry | Who can see what and why | RBAC policies, BAAs, DPAs for third-party vendors [14][32] |

To streamline this process, integrate evidence bundling into your CI/CD pipeline. Automate the generation of model cards, validation results, and lineage summaries with every deployment [4]. This ensures your documentation stays current and ready for audit requests.

"If a decision cannot be reconstructed from logs, lineage, and versioned artifacts within one business day, you do not yet have a true audit trail – you have observability with hope attached." – Beek.cloud [4]

These artifacts are critical for connecting your internal controls to external compliance standards.

Mapping Controls to Compliance Frameworks

Once your documentation is in place, make sure every control aligns with the relevant compliance frameworks. Auditors expect your system’s functionality to meet recognized standards. For many sectors, NIST AI RMF and ISO/IEC 42001 are common benchmarks for AI governance. In healthcare, systems handling PHI must comply with HIPAA and often aim for HITRUST certification. Financial services teams face requirements from SEC, FINRA, and PCI DSS, while life sciences teams working on document AI systems for clinical or manufacturing workflows need to meet FDA 21 CFR Part 11 standards for electronic records [36][37].

State-level regulations are also evolving. NYC Local Law 144 mandates independent bias audits for automated hiring tools, Illinois House Bill 3773 requires disclosures for AI use in recruitment, and California’s CPRA expands governance over automated decision-making [38]. Proactively mapping your controls to these frameworks simplifies evidence collection and helps reviewers quickly identify compliance coverage and any gaps.

Running Internal Audits

The best way to avoid surprises in an external audit is to conduct thorough internal audits. These audits should validate your controls on an ongoing basis, following the logging and data flow practices discussed earlier. Organize reviews by risk level – monthly for high-risk outputs like automated financial or clinical decisions, and quarterly for lower-risk systems [34]. Each review should trace a document’s lifecycle, from ingestion to archive, including simulated access requests and deletion events.

Beyond accuracy checks, monitor metrics such as retention SLA compliance, the percentage of records with complete audit trails, and how quickly expired artifacts are deleted [7]. With only 23% of IT leaders confident in their organization’s ability to manage AI governance effectively [34], these internal reviews are vital for identifying and addressing vulnerabilities before an external audit.

"If a compliance requirement cannot be enforced in code, tested in CI, and observed in production logs, it is not ready for a production AI system." – UCAFS [35]

Conclusion: Next Steps for Compliance-Ready Document AI

Now that you’ve explored the strategies outlined in this guide, it’s time to focus on putting them into action. Compliance isn’t just about individual controls like PII detection or audit logging – it’s about creating a system where all parts work together seamlessly. Skipping even one piece could leave you exposed to non-compliance risks.

Start small with minimum viable governance: implement basic data classification, logging, and human approval checkpoints. Once those are in place, you can gradually add more advanced controls as your system evolves.

"Fast governance that exists beats perfect governance that never ships." – Docsigned [9]

From there, tackle a clear checklist to ensure your system is robust. For example:

- Map out your top 10 data sources, ranked by sensitivity.

- Confirm that every third-party vendor handling sensitive data has a signed BAA or DPA in place before their API is used.

- Schedule regular audit drills to ensure your team can quickly produce key artifacts like an AI inventory, log slice, subprocessor list, and governing policies [32].

These steps will help uncover gaps that could become issues during external reviews.

Finally, approach every design choice with the mindset that it must withstand scrutiny. If a data flow, retention rule, or access policy can’t be explained clearly to a non-technical auditor, take the time to simplify or document it thoroughly before moving into production. For detailed guidance on implementing specific controls, refer back to earlier sections of this guide.

FAQs

What’s the minimum audit trail required for an LLM document pipeline?

The minimum audit trail for a large language model (LLM) document pipeline should maintain a durable, source-to-output record that tracks essential details. This includes:

- Source document ID and intake timestamp: To identify and timestamp when a document enters the pipeline.

- Processing steps and corrections: A record of all transformations and edits applied to the document.

- Access logs: Documentation of who accessed both the documents and their outputs.

- PII detection and redaction actions: Tracking any actions taken to identify and redact personally identifiable information.

- Data retention and deletion policies: Clear records of how long data is stored and when it is removed.

- Tamper-evident logs: Logs designed to ensure the integrity of the pipeline by detecting unauthorized changes.

This approach supports compliance, ensures accountability, and provides traceability across the entire document pipeline.

How can we prove PII/PHI never reached the LLM or our monitoring tools?

To ensure that sensitive information like Personally Identifiable Information (PII) or Protected Health Information (PHI) never reaches a Large Language Model (LLM) or monitoring tools, it’s crucial to implement multiple protective measures at data entry points. Here’s how:

- Detection and Redaction: Use automated tools to identify and redact sensitive data before it interacts with the LLM. This ensures that critical information is filtered out early in the process.

- Tokenization and Access Controls: Enforce tokenization and apply strict access controls to limit who can interact with sensitive data. This adds an additional layer of security.

- Tamper-Evident Audit Logs: Maintain logs that are tamper-proof to track data handling. These logs provide transparency and help meet compliance requirements.

- Boundary Verification: Monitoring tools should actively verify that sensitive information is excluded from logs, prompts, or any training data. This step is essential for ensuring compliance and safeguarding privacy.

These practices not only protect sensitive data but also provide the necessary evidence for compliance audits, reinforcing trust and accountability in data handling processes.

How do we run vendor risk reviews for LLM APIs and subprocessors?

To conduct vendor risk reviews for LLM APIs and subprocessors, focus on a few key areas:

- Security controls: Examine the measures they have in place to protect data and prevent unauthorized access.

- Data retention and deletion policies: Understand how they store data, how long they keep it, and how they handle deletion.

- Compliance certifications: Verify their adherence to relevant standards like SOC 2, ISO 27001, or GDPR.

Also, evaluate how they manage training data and use structured questionnaires to assess their risk management practices. Regular security reviews and compliance checks are essential to ensure ongoing monitoring. This approach helps reduce risks and keeps you aligned with compliance requirements when working with LLM APIs and subprocessors.

Leave a Reply