Phi-4 vs Gemma 3 vs Mistral Small: A Practical Benchmark for the Enterprise Use Cases That Actually Matter

Huzefa Motiwala May 1, 2026

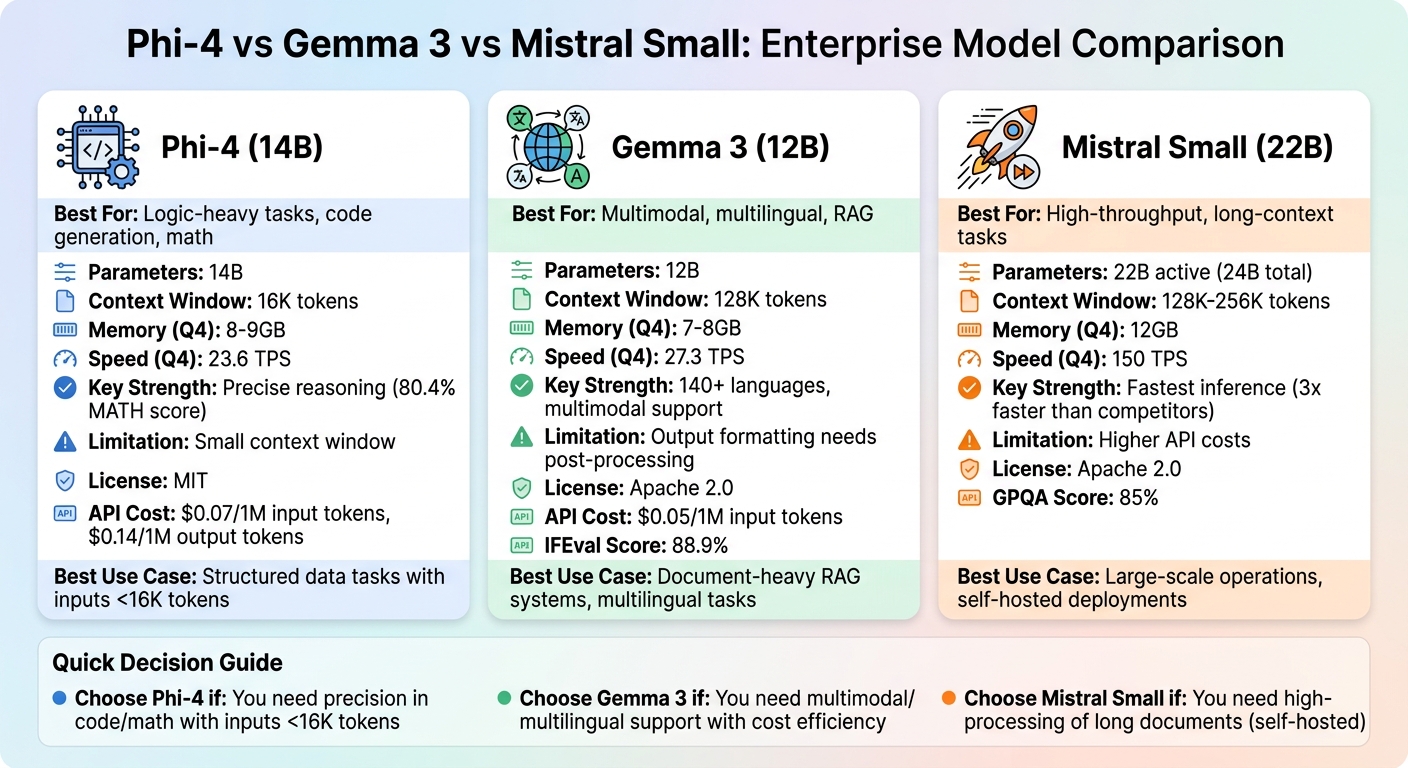

If you’re deciding between Phi-4, Gemma 3, and Mistral Small for enterprise use, here’s the bottom line:

- Phi-4 (14B): Best for tasks needing precise reasoning, math, and code generation. Works efficiently with lower memory (8–10GB at 4-bit quantization) but is limited by a 16K token context window.

- Gemma 3 (12B): Ideal for multilingual, multimodal tasks and long-document processing with a 128K token window. Balances cost and performance, but outputs may need some post-processing.

- Mistral Small (22B): Excels in high-throughput, long-context tasks (up to 256K tokens) with fast inference speeds (150 tokens/second). Great for large-scale workflows, especially when self-hosted.

Quick Comparison

| Model | Best For | Key Limitation | Memory (Q4) | Speed (Q4) |

|---|---|---|---|---|

| Phi-4 | Logic-heavy tasks, code, math | 16K context window | 8–9GB | 23.6 TPS |

| Gemma 3 | Multimodal, multilingual, RAG | Output formatting | 7–8GB | 27.3 TPS |

| Mistral Small | High-throughput, long-context | Higher API costs | 12GB | 150 TPS |

Key Takeaway

Choose Phi-4 for precision in structured data tasks, Gemma 3 for versatility in document-heavy or multimodal use, and Mistral Small for large-scale operations with long documents. Each model suits specific enterprise needs, so align your choice with your workflow requirements.

Phi-4 vs Gemma 3 vs Mistral Small: Enterprise AI Model Comparison

1. Phi-4

Document Q&A Performance

Phi-4 stands out when it comes to extracting specific details from dense and logic-heavy documents. Thanks to its training on high-quality synthetic data, it handles complex reasoning and structured output exceptionally well [8]. For instance, Phi-4 scored 80.4% on MATH (compared to GPT-4o’s 74.6%) and 56.1% on GPQA (versus GPT-4o’s 50.6%), showcasing its strength in document Q&A tasks [3].

However, its 16K token context window is a notable limitation. This is quite small when compared to competitors like Gemma 3’s 128K or Mistral Small’s 256K [7][9][3]. For enterprise-level retrieval-augmented generation (RAG) applications, Phi-4 is best suited for processing smaller document chunks where precision is more important than handling large volumes. If you’re tackling extensive legal contracts or multi-document analyses, you’ll need to design workflows that account for this constraint. Let’s now explore how Phi-4 performs in structured extraction tasks.

Classification and Structured Extraction

Phi-4’s built-in function-calling capabilities make it a strong contender for structured data transformation and entity extraction [8]. It performs particularly well when working with smaller text segments. Its scores – 84.8% on MMLU and 70.4% on MMLU-Pro – highlight its solid general knowledge and reasoning skills [7][3].

That said, its instruction-following consistency could be better. With an IFEval score of 63.0%, it falls short of Gemma 3’s 88.9% [7][3]. This means you’ll need to craft precise prompts or provide few-shot examples to get reliable results, especially for tasks that require strict formatting or intricate logic. Next, we’ll dive into its performance in code generation and technical documentation.

Code Assistance and Context Handling

Phi-4 holds its own in code generation and technical documentation tasks. It processes at an inference speed of around 33–35 tokens per second [7][9], with a knowledge cutoff date of June 1, 2024 [7]. Its MIT license is a major advantage, offering full flexibility for commercial use without restrictions on modification [7][3].

"Phi-4 (14B parameters), through carefully curated synthetic data and high-quality corpora, surpassed many 70B-class models on math reasoning, logical analysis, and code generation." – Meta Intelligence [8]

In addition to its raw capabilities, its resource efficiency plays a significant role in practical deployment, as outlined below.

Inference Speed and Memory Footprint

Phi-4 requires about 28GB of VRAM at FP16 precision, but 4-bit quantization reduces this to just 8–10GB [3]. Cost-wise, input tokens are priced at $0.07 per 1M tokens, while output tokens cost $0.14 per 1M tokens [7]. For self-hosting, the annual cost for a single-GPU server is estimated at around $11,200, making it more cost-effective than GPT-4o’s API if your daily volume exceeds 15,000 requests [8].

On the downside, Phi-4’s multilingual support is limited compared to Gemma 3, often requiring fine-tuning for non-English enterprise documents [8][4]. Additionally, it is strictly text-based, with no native support for images or audio [7].

sbb-itb-51b9a02

2. Gemma 3

Document Q&A Performance

Gemma 3 stands out in enterprise applications with its 128K token context window, making it a powerful tool for processing large, multi-section documents [8]. This extensive window allows it to handle complex documents efficiently. Its IFEval score of 90.2% highlights its strong instruction-following capabilities [10]. For domain-specific queries, particularly those involving visual elements like charts, tables, or diagrams, the 4B variant performs well, scoring 75.8% on DocVQA [10]. This is largely due to its built-in multimodal support [8]. Additionally, Gemma 3 offers a more budget-friendly alternative compared to Phi-4, making it a practical choice for businesses.

Classification and Structured Extraction

In production scenarios, both the 4B and 12B variants of Gemma 3 achieve a perfect 100% JSON parse rate. However, the 4B model outshines the 12B in schema compliance, with an 87% compliance rate compared to the 12B’s 43.5% [5]. The 4B variant also generates extra commentary around JSON outputs, reducing the need for intensive post-processing to meet strict schema requirements. By comparison, Llama 3.1 8B achieves a higher 95.7% schema compliance [5], but Gemma 3’s performance remains competitive, especially for environments prioritizing cost and efficiency.

Code Assistance and Context Handling

Gemma 3 also excels in code assistance and managing extended contexts. Supporting 140+ programming languages, it is ideal for multilingual code documentation and internal tools [8]. Its Grouped-Query Attention (GQA) architecture ensures efficient handling of extended contexts. A real-world example includes a 1B variant deployment in European retail using WebAssembly, where it provided multilingual support with a time-to-first-token ranging from 200 to 500 milliseconds [4]. This demonstrates its capability to deliver quick responses in diverse, multilingual environments.

Inference Speed and Memory Footprint

Gemma 3 is available in multiple sizes – 1B, 4B, 12B, and 27B – catering to various hardware setups [2]. The 4B variant, with Q4_K_M quantization, achieves 71.6 tokens per second while requiring just 3GB of VRAM. Switching from Q8 to Q4 quantization boosts throughput from 49.1 to 71.6 tokens per second, all while maintaining a perfect JSON parse rate [5][6]. For real-time applications, the 4B variant is an excellent choice due to its balance of speed and resource efficiency. On the other hand, the 12B variant, which requires 8.1GB of VRAM and processes 27.3 tokens per second with a latency of 10,545 ms, is better suited for batch processing tasks [5]. These metrics underline the flexibility and performance of Gemma 3 across different enterprise use cases.

3. Mistral Small

Mistral Small uses a specialized architecture designed to handle enterprise-level document processing while keeping deployment costs manageable.

Document Q&A Performance

Mistral Small 3.1 delivers 85% accuracy on GPQA benchmarks, surpassing Gemma 3’s 80% accuracy rate [12]. Its 128K token context window (expandable to 256K in version 4) allows for complex multi-document synthesis and long-form retrieval-augmented generation, a huge step up from Phi-4’s 16K token limit [11][12][13]. Versions 3.1 and 4 also include native vision support, enabling the analysis of document images, charts, and UI screenshots without requiring separate OCR tools [12][13]. Additionally, its Sliding Window Attention architecture ensures memory usage remains efficient, even with longer documents (8K–32K tokens), making it particularly effective for processing detailed legal and technical files [1].

Classification and Structured Extraction

Beyond its Q&A capabilities, Mistral Small 3 shines in structured data extraction. It includes built-in support for function calling and JSON mode, which are vital for automating workflows in enterprises [1][11]. Its open-source Apache 2.0 license provides flexible commercial use, avoiding user restrictions found in Meta’s Llama licenses or training limitations in Google’s Gemma terms [3][13]. A notable example of its utility occurred in March 2026, when European government agencies adopted Mistral Small for on-premise processing of citizen submissions and regulatory filings. Running on a 32-core server, it classified documents, extracted data, and generated summaries, handling 50–80 documents per minute while adhering to GDPR requirements [4]. Its hybrid attention mechanism, combined with sparse matrix optimization, ensures high accuracy for specialized fields like medicine and law [12].

Inference Speed and Memory Footprint

Mistral Small 3 excels in efficiency, achieving an inference speed of 150 tokens per second, over three times faster than Llama 3.3 70B on the same hardware [12][14]. Its reduced layer count significantly improves forward pass times [14]. In terms of memory, the model requires roughly 44GB of VRAM at FP16 precision, but 4-bit quantization cuts this down to about 12GB, making it deployable on consumer-grade hardware like an RTX 4090 [1][14]. Version 4 introduces a Mixture of Experts architecture with approximately 22B active parameters (119B total), requiring around 238GB of VRAM in FP16 or 60GB in 4-bit quantization for full deployment [13]. For API use, Mistral Small 3 is priced competitively at $0.05 per million input tokens and $0.08 per million output tokens, offering a 30% cost advantage over Phi-4 [11][12]. This combination of speed, cost-efficiency, and flexibility makes it an excellent choice for high-volume, on-premise operations.

| Quantization Level | Memory Footprint | Hardware Compatibility |

|---|---|---|

| FP16 (Unquantized) | ~44GB | Enterprise GPUs (A100/H100) |

| 4-bit (Quantized) | ~12GB | Consumer GPUs (RTX 4090), MacBooks (32GB RAM) |

Pros and Cons

Here’s a breakdown of the strengths and weaknesses of each model, focusing on key criteria for enterprise deployment.

Phi-4 is a standout for tasks that demand precise reasoning and code generation. It operates efficiently with 8–9GB of VRAM at 4-bit quantization, delivering a throughput of 23.6 tokens per second. However, its 16K token context window can be a limiting factor for processing long-form documents. This makes it a strong choice for logic-heavy tasks with shorter inputs but less suitable for extended workflows.

Gemma 3 shines with its multimodal capabilities and support for 140 languages. It offers higher throughput and is more cost-effective, charging $0.05 per 1M input tokens compared to Phi-4’s $0.07 [5][7]. Running on 7–8GB of VRAM at 4-bit quantization, it achieves 27.3 tokens per second. While outputs might need minor post-processing, its 88.9% IFEval score reflects strong consistency in following instructions [7]. This makes it a great fit for multimodal tasks and budget-conscious deployments, particularly in document-heavy retrieval-augmented generation (RAG) systems.

Mistral Small focuses on high-throughput, long-context tasks while keeping memory usage efficient at 12GB VRAM with 4-bit quantization [1][14]. It excels at structured data extraction with built-in function calling and JSON mode. Additionally, its Apache 2.0 license allows unrestricted commercial use [1][11]. While API pricing for its newer versions can be higher, self-hosting offers a cost-effective solution for large-scale operations [9][11][12]. This model is especially effective for enterprise workflows involving extensive European-language document processing.

Here’s a quick comparison of the key trade-offs:

| Model | Best For | Key Limitation | Memory (Q4) | Speed (Q4) |

|---|---|---|---|---|

| Phi-4 | Math, logic, code generation | 16K context window | ~8–9GB | 23.6 TPS |

| Gemma 3 | Multimodal tasks, long documents, RAG | Requires output stripping | ~7–8GB | 27.3 TPS |

| Mistral Small | High-throughput enterprise workflows | Higher API costs | ~12GB | 150 TPS |

When deciding on a model for production use, Phi-4 is best for scenarios with inputs under 16K tokens that need precise logic. Gemma 3 is the go-to for multimodal tasks and document-heavy RAG systems where cost matters. Meanwhile, Mistral Small is ideal for high-volume operations, particularly for lengthy European-language documents, and is most economical when self-hosted [1][8].

Conclusion

Select your model based on the specific demands of your workload, not just generic benchmarks. The recommendations below are tailored to meet the practical requirements of enterprise-level deployments.

For code-related tasks and complex structured data extraction, go with Phi-4 (14B). It boasts an impressive 82.6% HumanEval score [6] and excels in debugging, code generation, and schema mapping with inputs up to 16K tokens. It operates efficiently, requiring approximately 10 GB of VRAM at Q4 quantization, and comes with the flexibility of an MIT license for redistribution.

For document-based Q&A and high-throughput scenarios, Mistral Small 3.1 (24B) strikes the perfect balance. It handles 128K context windows at a speed of 150 tokens per second, all while maintaining a factual consistency score of 0.762 [5][14]. With self-hosting on a 24 GB GPU, enterprises processing over 15,000 requests daily can significantly reduce costs [1].

For multimodal tasks or global-scale deployments, Gemma 3 is the standout choice. It supports native vision capabilities and covers over 140 languages [15]. Its 128K context window outperforms Phi-4’s 16K limit for long-document retrieval-augmented generation. Additionally, its Apache 2.0 license and efficient architecture make it an excellent option for cost-conscious, high-speed deployments [15].

Enterprise-specific SLMs deliver 90% of GPT-4’s performance on 80% of tasks while cutting costs by 86% through self-hosting [1][4]. Focus on your production needs – whether it’s context length, speed, or domain-specific tasks – rather than relying solely on benchmark scores. Choosing the right model for your operational requirements will help you achieve the best return on investment.

FAQs

Which model is easiest to run on a single GPU in production?

The Phi-4 Mini stands out as the simplest model to deploy on a single GPU for production use. Thanks to its optimized design and 4-bit quantization, it requires just around 3GB of VRAM. This makes it a perfect fit for consumer-grade GPUs, such as the RTX 4090.

While alternatives like Gemma 3 and Mistral Small demand between 3GB and 5GB of VRAM, the Phi-4 Mini’s efficiency ensures it remains the most practical option for systems with limited hardware resources.

How should I chunk long documents for RAG if the model has a short context window?

To handle lengthy documents in a Retrieval-Augmented Generation (RAG) setup, especially when working with models that have short context windows, the key is to break the document into smaller, manageable chunks. These chunks should align with the model’s maximum token limit (e.g., 8K–32K tokens). To keep the content coherent, use natural divisions like paragraphs or sentences when splitting the text.

Each chunk is processed independently by the model. To ensure context isn’t lost between sections, consider creating overlapping chunks. This overlap helps maintain continuity and avoids misinterpretation. Once all chunks are processed, their outputs can be combined or linked to form a complete and cohesive response.

How do I enforce reliable JSON/schema outputs across repeated runs?

Creating reliable JSON or schema outputs requires a mix of precise instructions, leveraging model capabilities, and thorough validation. Here’s how you can achieve consistent results:

- Start with clear prompts: Provide explicit instructions like "produce output in valid JSON format matching schema X" and include examples. This helps guide the model toward the desired structure.

- Use model features effectively: Tap into reasoning modes or structured output capabilities, which many models offer, to improve accuracy and consistency.

- Validate programmatically: Use JSON schema tools to check the outputs against your schema. If errors arise, rerun the process or make corrections as needed.

By combining clear instructions, thoughtful use of model features, and rigorous validation, you can significantly improve the reliability of JSON/schema outputs.

Related Blog Posts

- LangGraph vs CrewAI vs AutoGen: How We Evaluated All Three Before Recommending One for a Production Deployment

- The Evaluation Framework We Use When a Client Asks Us Which Agent Stack to Build On

- Tool Calling Reliability Across Agent Frameworks: What We Measured and What It Means for Your Architecture

- Agent Memory Architecture: Why Your Design Decision at Week One Determines Your Ceiling at Scale

Leave a Reply