The Hidden Cost of ‘Move Fast and Break Things’ When Your System Already Has 200K Users

Huzefa Motiwala March 25, 2026

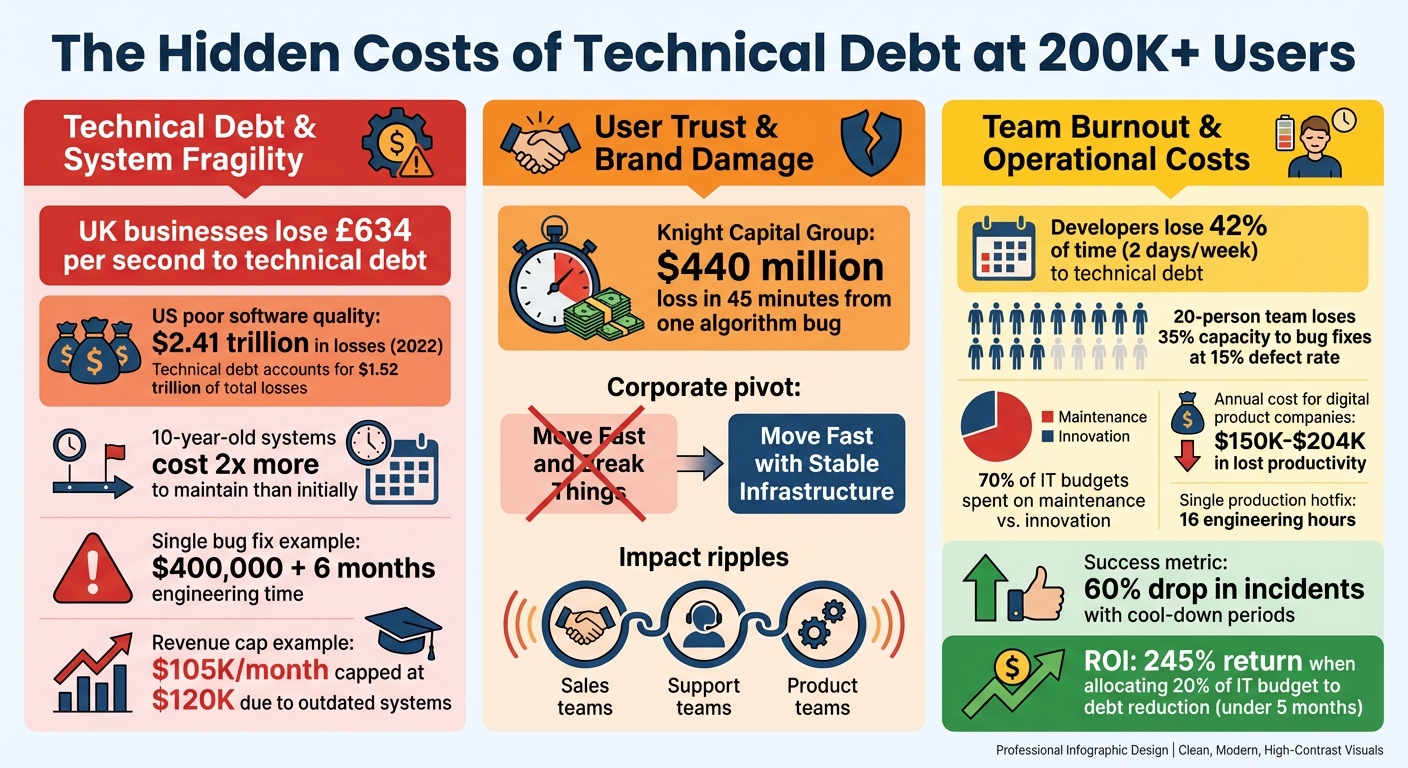

When your user base grows beyond 200,000, the "move fast and break things" mindset can backfire. At this scale, even small bugs can lead to massive time losses, eroded trust, and overwhelmed teams. Here’s what you need to know:

- Technical Debt: Quick fixes in the early days pile up, making systems fragile and costly to maintain. A single bug can cost $400,000 and months of work.

- User Trust: Frequent outages or bugs frustrate users, turning them into unpaid testers and damaging your reputation.

- Team Burnout: Developers spend up to 42% of their time on maintenance, leaving little room for innovation.

Key Solutions:

- Prioritize Stability: Shift focus to "stable infrastructure" for critical systems like payments or authentication.

- Gradual Refactoring: Use strategies like the Strangler Fig Pattern to modernize systems incrementally without disrupting operations.

- AI Tools for Diagnostics: Leverage AI to identify weak points in your codebase, reducing guesswork.

- Traffic Light Roadmap: Categorize fixes by impact and risk to tackle high-priority issues first.

- Allocate Resources: Dedicate 20% of each sprint to reducing technical debt to regain development speed.

The takeaway? Scaling without addressing technical debt is unsustainable. By balancing speed and stability, you can grow without breaking your system – or your team.

How to manage critical technical debt at scale

sbb-itb-51b9a02

The Real Costs of Prioritizing Speed Over Stability

The True Cost of Technical Debt at Scale: Key Statistics and Impact Areas

When your user base surpasses 200,000, the hidden expenses of prioritizing speed over stability become impossible to ignore. What once seemed like theoretical risks turn into measurable, costly problems. These costs emerge in three key areas: a fragile technical foundation, declining user trust, and an overburdened team.

Tech Debt and Fragile Systems

Technical debt is like compound interest – it grows over time and becomes harder to manage. For instance, a 10-year-old system can cost twice as much to maintain as it did initially [6]. In the UK, technical debt costs businesses an estimated £634 per second, while in the U.S., poor software quality led to losses exceeding $2.41 trillion in 2022, with technical debt accounting for $1.52 trillion of that total [1][6].

Ward Cunningham, who introduced the term "technical debt", explained its impact:

Shipping first-time code is like going into debt. A little debt speeds development so long as it is paid back promptly with refactoring… Entire engineering organizations can be brought to a stand-still under the debt load of an unfactored implementation [6].

The consequences are clear. A business earning $105,000 per month might find its revenue capped at $120,000 due to outdated systems, risking collapse with further growth [7]. Tasks that once took hours now drag on for weeks, and bug fixes often cause new problems [6]. Eventually, teams hit "Zero Velocity", where maintaining the system absorbs all engineering resources, leaving no room for new features [2].

Consider this: fixing a single race condition that had been copy-pasted 47 times in a codebase cost one company $400,000 and six months of engineering time [8]. These rushed decisions, repeated over time, create a fragile system that frustrates users and exhausts teams.

User Churn and Brand Damage

When users become the ones finding bugs instead of a QA team, trust erodes quickly [4]. Technology consultant Eric Brown summed it up:

Customer trust erodes when they become your de facto QA team [4].

The financial and reputational damage can be devastating. Take the case of Knight Capital Group in 2012: a new trading algorithm containing old, unused code caused billions of dollars in erroneous trades in just 45 minutes, resulting in a $440 million loss and the end of the company’s independence [10].

Even Facebook, once known for its "move fast and break things" mantra, had to rethink its approach. In 2014, CEO Mark Zuckerberg shifted the company’s focus to "Move fast with stable infrastructure" as the platform grew to billions of users. The risks of instability had become too great [10].

Frequent outages don’t just annoy users – they ripple across the entire organization. Sales teams lose confidence in pitching new features, support teams drown in complaints, and product teams question the engineering department’s reliability [9]. The instability doesn’t just harm customer trust; it inflates operational costs and spreads stress throughout the company.

Operational Costs and Team Burnout

Fragile systems don’t just cost money – they drain time and energy. Developers lose 42% of their time, or about two days per week, dealing with technical debt [6]. At a defect leak rate of 15%, a 20-person team might lose 35% of its capacity to bug fixes, often referred to as the "Janitor Tax" [2].

Most organizations spend 70% of their IT budget on maintenance rather than innovation [6]. For a digital product company, patching legacy systems can cost $150,000–$204,000 annually in lost productivity and crisis management [7]. A single production hotfix requires about 16 engineering hours, or two full workdays, across triage, root cause analysis, and deployment [2].

This constant firefighting takes a toll. Engineers are stuck switching between developing new features and fixing rushed implementations. As software engineer Hui Supat puts it:

The machine is only humming because it’s burning [2].

Companies that measure technical debt financially and schedule "cool-down periods" have seen production incidents drop by as much as 60% [4]. Those that allocate 20% of their IT budget to reducing technical debt often achieve a 245% return on investment within just under five months [6]. The numbers don’t lie: investing in stability isn’t optional – it’s essential for long-term survival at scale.

How to Find and Fix System Weak Points

Fixing hidden issues in a system with 200,000 users isn’t just costly – it’s also risky. But modern tools can uncover weak points and help prioritize fixes with precision.

Using AI-Agent Assessments to Map System Health

AI-based assessments are a game-changer for diagnosing system health. These tools analyze your codebase to reveal weak spots that affect performance, cause outages, or add unnecessary complexity. They don’t just flag "bad code" but also highlight architectural bottlenecks, security vulnerabilities, performance issues, and areas of technical debt [13].

Take the example of Mark Mishaev, an engineer who used an AI Harness Scorecard in February 2026 to review his open-source repository. Initially, the repository scored a disappointing 34/100 (F). The issues? Missing type checks, absent security scans, and undocumented architectural decisions. After implementing strict type checking with mypy, running dependency audits using pip-audit, and creating agent instructions (AGENTS.md), the score shot up to 92/100 (A) – all within just a few hours [13].

This assessment uses 31 checks inspired by DORA 2025 research. It evaluates whether your CI pipeline catches errors before deployment, if your architecture is well-documented, and whether your dependencies are up-to-date [13]. It also flags structural risks like "The Hairball" (overly coupled modules that overwhelm AI token limits) and "The Butterflies" (god objects like utils/index.ts that amplify the impact of changes) [15].

"We focus on whether the AI can generate correct code, when we should be asking whether the repo can catch incorrect code regardless of who wrote it." – Mark Mishaev, Engineering Leader [13]

Another method is behavioral code analysis. Tools like CodeScene identify areas of high complexity combined with frequent changes, which are typically fragile. Research shows that in chaotic codebases, 20% of files are responsible for 80% of code changes [11][12]. These are the areas most likely to cause issues.

Want to start small? Use this Git command:

git log --since="120 days ago" --name-only This will list the top 50 files with the most activity. Then, combine this with runtime telemetry from tools like Sentry or GA4 to identify modules that generate the most errors. The overlap between these two data sources reveals your system’s most fragile areas [11][12].

These insights set the stage for prioritizing fixes.

Traffic Light Roadmaps for Issue Prioritization

Once you’ve identified the problem areas, the next step is deciding what to fix first. The Traffic Light Roadmap is a simple but effective way to categorize issues based on two factors: Business Impact (how much it disrupts operations) and Remediation Risk (how hard or risky it is to fix) [14][16].

Here’s how the roadmap breaks down:

| Traffic Light Status | Impact / Risk Profile | Action |

|---|---|---|

| Critical (Red) | High Impact / Low Risk | Fix immediately – allocate 2–4 hours per sprint [14][16] |

| Managed (Yellow) | High Impact / High Risk | Requires strategic planning, such as an RFC and team buy-in [14][16] |

| Scale-Ready (Green) | Low Impact / Low Risk | Fix opportunistically when updating nearby code [14][16] |

For example, a module with a 30% change failure rate is a critical risk and needs immediate attention. One project revealed how unmanaged technical debt led to deploy times of 47 minutes and a simple CSS fix taking two full days – clear signs of critical issues [11].

"Technical debt isn’t ‘bad code.’ It’s the gap between your system’s current state and the state it needs to be in to meet business goals." – CodeIntelligently [11]

To build your roadmap, pull debt items from sprint retrospectives, incident post-mortems, and developer surveys. Score each issue on a 1–10 scale for Business Impact (e.g., delays, incidents, morale, customer complaints) and Remediation Risk (e.g., system complexity, rollback challenges). Then, map them to the appropriate category [14][16].

Start with Quick Wins – high-impact, low-risk fixes like adding database indexes or resolving race conditions. These are easy victories that build momentum and earn support for tackling more complex issues [14]. For "Managed" items, consider the Strangler Fig pattern: implement new solutions alongside the old ones, gradually transitioning traffic to ensure stability [11][14].

Keep a living Technical Debt Register to track the location, severity, business impact (in terms of dollars or lost engineering hours), and effort required for each issue [11]. When seeking buy-in, frame the debt in business terms. For instance, "This module adds 3 hours to every feature, costing $X per month" [11][17]. Companies that actively address technical debt can boost feature delivery speed by up to 40% [17].

How to Scale Without Breaking Your System

Scaling up without disrupting your system is a delicate balancing act. After identifying and prioritizing system issues, the key isn’t to start from scratch. Instead, incremental modernization allows you to upgrade your system piece by piece while keeping it operational. This approach ensures you can continue innovating while maintaining stability as your user base grows.

Managed Refactoring: A Smarter Alternative to Full Rewrites

While a full system rewrite might sound tempting, it often leads to risks like multi-year delays, no immediate value, and a freeze on feature development [18]. Instead, Managed Refactoring offers a safer route by gradually replacing legacy modules without affecting end users.

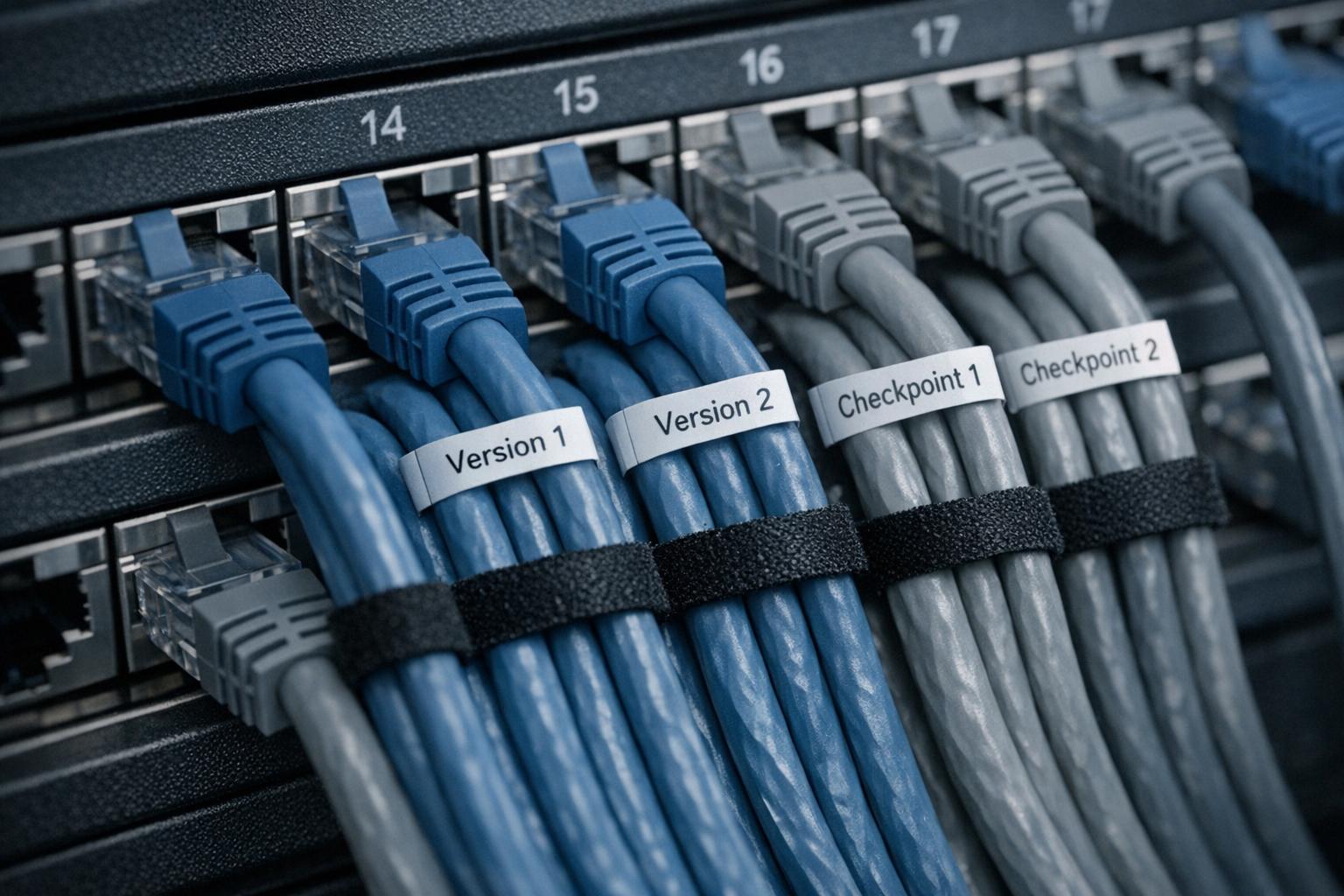

One effective method is the Strangler Fig Pattern, which involves building new modules alongside old ones and shifting traffic over gradually. For example, in July 2024, Airbnb upgraded from React 16 to React 18 using a "React Upgrade System." They employed module aliasing, environment targeting, and A/B testing in production to fix bugs incrementally. This phased approach allowed them to achieve a full rollout with no rollbacks while continuing to release new features [19].

"The most reliable path from legacy to modern architecture is incremental migration rather than a complete rewrite." – Mark Knichel, Vercel [19]

To prioritize which modules to refactor first, use a Strangler Score:

(Change Frequency × Pain Level) / (Business Criticality × Coupling)

Higher scores indicate modules that should be addressed sooner [18].

Another technique is Parallel Runs (Shadow Mode), where old and new systems run simultaneously to validate outputs and catch edge cases before full migration [18][20]. For data migrations, the Dual Write Pattern can be used to write to both old and new databases while gradually shifting read traffic. Here’s how the process works:

| Phase | Data Migration Step | Description |

|---|---|---|

| Phase 1 | Dual Write, Old Read | Write to both databases; old database remains the source of truth for reads. |

| Phase 2 | Dual Write, New Read (Fallback) | Start reading from the new database, but fallback to the old database if data is missing. Backfill missing data. |

| Phase 3 | Dual Write, New Read | Fully read from the new database while continuing to write to both. |

| Phase 4 | Single Write, New Read | Stop writing to the old database and decommission it. |

Gradual rollouts are essential. Begin by routing 5% of traffic to the new system, monitor for errors, then increase to 20%, 50%, and finally 100% over several weeks. This was the strategy Slack used to rebuild its desktop app, resulting in faster launch times and reduced memory usage. Snapchat also re-engineered its Android app, achieving a 20% speed boost and a 25% reduction in app size, which helped reverse user stagnation [21].

To maintain momentum, dedicate 20% of each sprint to refactoring. This "20% Rule" ensures continuous progress without pausing new feature development. Companies using this strategy have reported productivity gains of 20% to 45% [19]. Additionally, document technical shortcuts using Architecture Decision Records (ADRs), specifying triggers for remediation (e.g., "refactor when user count exceeds 10,000") to prevent unresolved debt from becoming a problem later [11][22].

Balancing Speed and Stability with Variable-Velocity Engine

Not all parts of your system need to evolve at the same pace. While core infrastructure – like payment systems or user authentication – demands stability, less critical components, such as UI experiments or marketing pages, can iterate more quickly [3]. This concept is central to the Variable-Velocity Engine (V2E) framework, which adjusts development speed based on system criticality and business needs.

Mark Zuckerberg captured this principle in 2014 with the updated motto:

"Move fast with stable infrastructure." – Mark Zuckerberg, CEO, Meta [3][23]

Identify your non-breakable cores, which are areas where downtime or bugs would directly impact revenue or user trust. These should have stricter testing, code reviews, and deployment gates [3]. For less critical areas, maintain faster iteration cycles to encourage innovation.

To keep your architecture clean, use Architectural Firewalls. These tools enforce boundaries by rejecting pull requests that increase complexity or violate predefined rules. Without these safeguards, even a small defect escape rate can add up – on a 20-person team shipping 10 features daily, a 5% defect rate translates to 40 hours of weekly maintenance [2]. If that rate climbs to 28%, your capacity for innovation drops to zero [2].

Traffic orchestration is another key strategy. Use API gateways to manage traffic flow between old and new systems. Follow these stages for a smooth transition:

| Migration State | Traffic Mode | Description |

|---|---|---|

| Validation | Shadow | Old system handles requests; new system receives a copy to validate outputs. |

| Transition | Canary | Route a small percentage of live traffic to the new system to monitor performance. |

| Finalization | Cutover | Route all traffic to the new system, keeping the old system as a standby. |

| Retirement | Decommission | Fully retire the old system once stability is confirmed. |

This phased approach ensures reversibility – if issues arise, you can roll back immediately [24][19].

Finally, keep an eye on your Rework Rate – the percentage of time spent fixing issues versus building new features. If this exceeds 20%, your development pace may be unsustainable [2]. Use DORA Metrics (Deployment Frequency, Lead Time for Changes, Change Failure Rate, and Time to Restore) to monitor architectural friction and ensure it doesn’t hinder progress [21].

Conclusion: How to Scale Without Breaking Things

What You Need to Remember

Scaling quickly without addressing technical debt can lead to overwhelming maintenance issues. For instance, when a company reaches 200,000 users and prioritizes speed over stability, the focus often shifts from developing new features to simply maintaining the existing system. At a 15% defect leak rate, a 20-person team might find 35% of its capacity consumed by maintenance tasks [2]. If that defect rate rises to 28%, the team’s ability to innovate effectively disappears altogether [2].

To avoid this, keep shipping features but modernize your infrastructure step by step. Strategies like the Strangler Fig Pattern allow you to gradually replace outdated modules without disrupting operations. Tools such as Traffic Light Roadmaps can help you decide what to tackle first, focusing on business impact and risk rather than emotional urgency. Similarly, the Variable-Velocity Engine (V2E) framework emphasizes that not all parts of your system require the same development speed – your payment systems need stability, while marketing pages can evolve more rapidly.

"Quality is structurally cheaper than velocity. ‘Move Fast’ is a high-interest payday loan: it buys optics today and compounds debt tomorrow." – Hui Supat, Engineer [2]

Using these principles, founders can take practical steps to ensure their systems remain stable while scaling.

What Founders Should Do Next

Start by measuring your Rework Rate – the percentage of each sprint spent fixing issues instead of building new features. If it exceeds 20%, your current development pace is unsustainable [2]. Before making major decisions, evaluate your system’s health. AI-Agent Assessments can analyze your codebase to uncover architectural weaknesses, security risks, and areas burdened by technical debt, giving you a clear view of potential vulnerabilities.

Allocate 10–20% of your development team’s capacity specifically to reducing technical debt [19]. Opt for managed refactoring over full-scale rewrites, as the latter has a failure rate of 60–80% [5]. If maintenance already consumes more than 30% of your capacity, consider implementing a Feature Freeze to stabilize the system and prevent further deterioration [2]. The goal here isn’t flawless perfection but rather creating a buffer – a margin of safety – between your system’s capabilities and the demands placed on it. This margin allows you to handle unforeseen challenges without compromising what already works [25].

FAQs

How do I know when “move fast” is hurting us?

When you notice rising technical debt, increasing system fragility, or growing operational risks, it’s a clear sign that “moving fast” is starting to backfire. Frequent bugs, unexpected outages, or constant rollbacks are red flags that speed is coming at the cost of stability. Skipping crucial steps like testing or code reviews might save time upfront but adds layers of hidden complexity, making systems harder to manage in the long run. If deploying new features feels like a gamble or firefighting becomes part of the daily routine, it’s time to rethink your approach and aim for a healthier balance between speed and reliability.

What should we fix first without a risky rewrite?

To make meaningful progress without disrupting the user experience, start with high-impact, low-risk adjustments. These could include refining critical parts of the system to boost stability and performance over time. Instead of diving into large-scale rewrites – which often come with unnecessary risks – focus on smaller, incremental updates. This approach helps stabilize the system while ensuring everything continues to run smoothly.

How can we reduce tech debt without slowing feature delivery?

To tackle tech debt while keeping feature delivery on track, concentrate on structured debt management, careful prioritization, and phased updates. Start by measuring tech debt across important areas to pinpoint the most pressing issues. Then, prioritize based on how much each item impacts the business and the risks involved. Instead of overhauling entire systems, focus on incremental improvements – like updating individual components. This way, teams can enhance system stability step by step without sacrificing development speed. It’s all about finding the right balance between progress and maintaining the system’s overall health.

Related Blog Posts

- The 6-Month Wall: Why AI-Built Apps Start Breaking After 10,000 Users

- Every Startup Has Tech Debt. The Ones That Die Are the Ones That Pretend They Don’t.

- The $1M ARR Refactor Trigger: When Your Codebase Needs to Grow Up

- The AI Productivity Paradox: Your Team Ships More Code but Moves Slower. Here’s Why

Leave a Reply