The AI Productivity Paradox: Your Team Ships More Code but Moves Slower. Here’s Why

Huzefa Motiwala March 20, 2026

Your team is writing more code than ever thanks to AI tools, but progress feels slower. Why? AI speeds up coding but creates new challenges in testing, reviewing, and maintaining code. Here’s the problem in a nutshell:

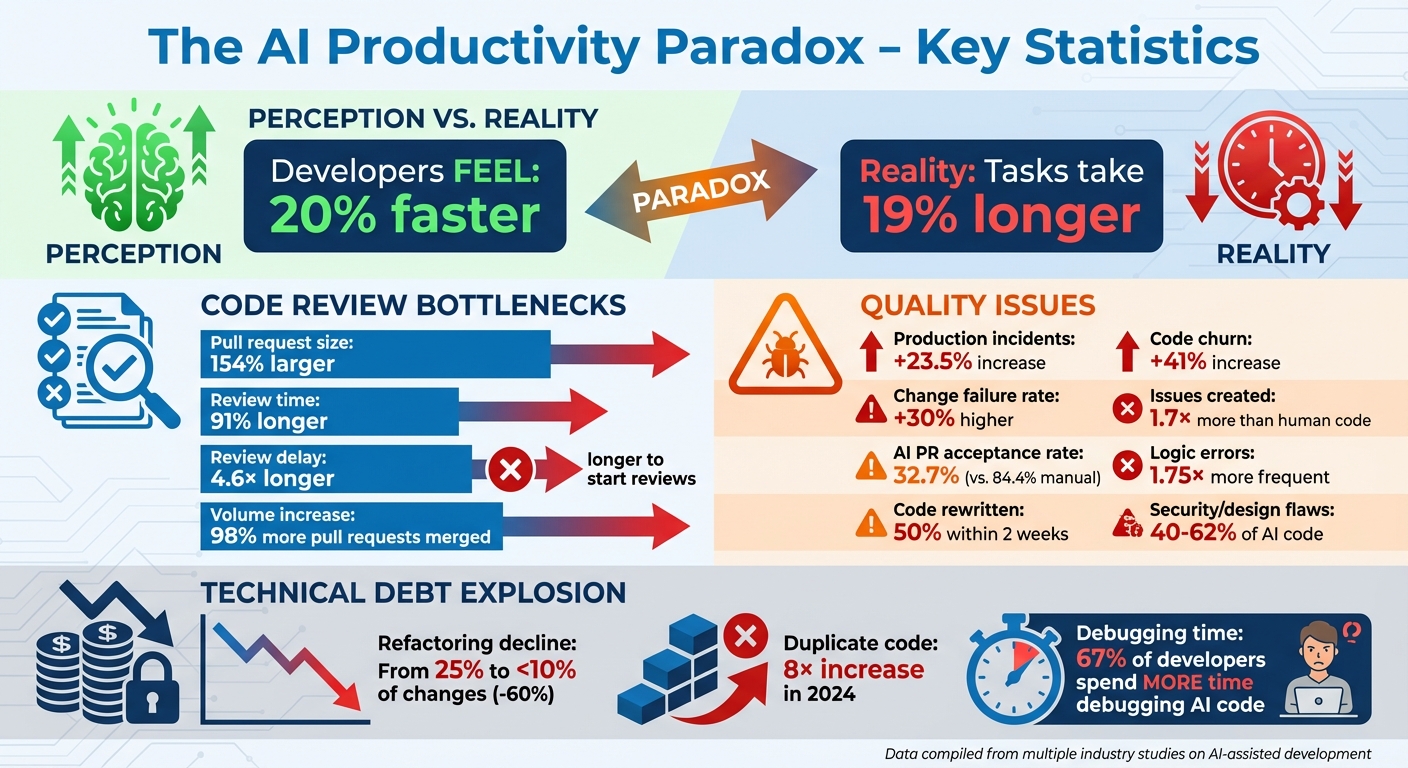

- Perception vs. Reality: Developers feel 20% faster, but tasks take 19% longer.

- Code Review Bottlenecks: AI-generated pull requests are 154% larger and take 91% longer to review.

- Quality Issues: Teams using AI tools report 23.5% more production incidents and 30% higher change failure rates.

- Technical Debt: Refactoring drops by 60%, while duplicate code increases 8×, leading to unmanageable complexity.

To fix this, focus on balancing speed with long-term stability. Use structured frameworks like AlterSquare’s V2E to manage technical debt, prioritize refactoring at revenue milestones, and customize AI tools for specific tasks instead of overhauling workflows. The goal? Avoid trading short-term gains for long-term problems.

AI Productivity Paradox: Key Metrics Showing Hidden Costs of AI-Generated Code

The AI Productivity Paradox Explained

AI tools are speeding up individual coding tasks but slowing down overall project delivery – a contradiction that highlights the tension between personal productivity and team efficiency.

What Developers Feel vs. What Metrics Show

When developers see code generated in real time, it creates a psychological effect often called the "immediate feedback illusion" [1]. This illusion makes the process feel faster and easier because developers spend less time on repetitive tasks, like writing boilerplate code. The reduced mental effort in the early stages of coding can be misleading, masking the real challenges that come later.

The catch is that while AI accelerates routine coding, it shifts the effort to other stages of development. Tasks like code review, testing, and integration become more demanding. Developers often find themselves manually reviewing up to 75% of AI-generated code due to lingering doubts about its quality [2]. Debugging AI-written code can be especially time-consuming because it requires unraveling the AI’s logic, which is often less intuitive than following one’s own thought process [2].

This disconnect between how developers perceive their productivity and the actual outcomes highlights a key challenge in how AI is reshaping development.

How AI Changes Development Workflows

AI is fundamentally altering workflows by shifting the focus from writing code to managing and refining it. Developers are moving from being pure "creators" to acting as "orchestrators" who oversee and improve AI-generated outputs [6]. Studies show that teams using AI merge 98% more pull requests, but those pull requests are 154% larger and take 91% longer to review, and AI-generated code waits 4.6× longer for a review to start [6] [8].
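A rough back-of-envelope calculation shows why review queues back up. The sketch below assumes the two figures compose multiplicatively (98% more pull requests, each taking 91% longer to review), which the cited studies do not state. The function name and the 10-hour baseline are illustrative only.

```python
def review_load_multiplier(pr_volume_increase: float, review_time_increase: float) -> float:
    """Return how many times more total review time a team needs.

    Assumption (not from the cited studies): the per-PR review-time
    increase applies uniformly to the larger volume of PRs.
    """
    return (1 + pr_volume_increase) * (1 + review_time_increase)


# Figures quoted above: 98% more PRs, 91% longer review time per PR.
multiplier = review_load_multiplier(0.98, 0.91)

# Hypothetical baseline: a team that spent 10 reviewer-hours per week.
baseline_hours = 10
print(f"Review load multiplier: {multiplier:.2f}x")
print(f"Weekly review hours: {baseline_hours * multiplier:.1f}")
```

Under that assumption, total review effort nearly quadruples even though each developer feels faster, which is consistent with reviews becoming the constraint.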

"The bottlenecks are not typing speed. They are design reviews, PR queues, test failures, context switching, and waiting on deployments." – Cerbos [8]

The impact on quality is also concerning. Organizations using AI tools have reported a 23.5% increase in production incidents and a 30% rise in change failure rates. Manual pull requests have an 84.4% acceptance rate, while AI-generated ones succeed only 32.7% of the time. Nearly half of AI-generated suggestions are rewritten within two weeks, leading to a 41% increase in code churn. Meanwhile, refactoring – the essential upkeep that keeps codebases stable – has dropped from 25% of all changes to less than 10%, as teams prioritize rapid feature delivery over long-term stability [2] [5] [8].

The Hidden Costs of AI-Generated Code

AI code generation can speed up production, but it often comes with hidden costs that affect both stability and long-term maintainability.

More Complexity, Less Stability

One of the biggest challenges with AI-generated code is something called comprehension debt. This refers to the growing disconnect between the amount of code in your system and how much of it your team truly understands [9]. While the code may pass tests, hidden logic flaws can still exist, giving a false sense of reliability.

"Comprehension debt is the growing gap between how much code exists in your system and how much of it any human being genuinely understands." – Addy Osmani, Director, Google Cloud AI [9]

Here’s the reality: AI-generated code has been shown to create 1.7× more issues than code written by humans, with logic errors occurring 1.75× more often [2]. Even more concerning, between 40% and 62% of AI-generated code contains security or design flaws [2]. Fixing these issues often requires developers to reverse-engineer the AI’s intent, which can take more time than writing the code from scratch [7].

This lack of understanding and the resulting errors contribute heavily to technical debt.

Technical Debt Builds Faster

AI tools are designed to generate new code quickly, but they often prioritize speed over maintainability. Instead of improving or refactoring existing code, these tools tend to add new layers of logic, creating sprawling and inconsistent codebases. As a result, refactoring work has declined sharply, from 25% to less than 10% of all changes (a relative drop of at least 60%), while duplicate code blocks increased 8× in 2024 alone [5][10].

One particularly tricky issue is the "almost-right" problem. AI-generated code often looks correct and can pass basic tests, but it may still contain subtle flaws or fail to account for important business requirements [7]. Debugging this kind of code can actually take longer than starting fresh because developers first need to figure out what the AI was trying to achieve. In fact, nearly half of AI-generated suggestions are rewritten within two weeks, which has led to a 41% increase in code churn [2][5].

The result? A growing pile of technical debt that becomes harder to manage over time.

Bottlenecks Shift to Testing and Deployment

As technical debt grows, the challenges shift from writing code to testing and deployment. AI-generated code doesn’t eliminate bottlenecks; it just moves them further down the pipeline. This has created what experts call the verification bottleneck, where the pace of code generation far outstrips the ability of teams to review and test it [11][12].

Reviewing pull requests has become a major pain point. With the volume of pull requests skyrocketing, review times have increased by 91%, and senior engineers – who handle 80% of reviews – are often left skimming through complex changes. This increases the risk of bugs slipping through the cracks [5][11][13]. The consequences are clear: production incidents have risen by 23.5%, and change failure rates have jumped by 30% [2][3].

On top of that, 67% of developers report spending more time debugging AI-generated code than debugging code they wrote themselves [13]. This extra effort adds to the strain, making testing and deployment even more challenging.

In short, while AI-generated code might seem like a shortcut, it often creates new hurdles that teams must work harder to overcome.

How AlterSquare’s V2E Framework Balances Speed and Maintainability

The challenge with AI-driven development isn’t the tools themselves – it’s how they’re used across different stages of growth. AlterSquare’s Variable-Velocity Engine (V2E) addresses this by dynamically adjusting to your business needs. It strategically manages technical debt, speeding up processes when necessary and scaling back for stability when the time is right.

The 3 Stages of V2E

The V2E framework evolves with your business, shifting through three key stages to meet changing demands:

- Disposable Architecture: This stage prioritizes speed, ideal for startups racing to validate product-market fit. At this point, the goal is revenue generation over building a perfect system. AI-generated code often operates as "locally reasonable but globally arbitrary" [16], meaning it’s designed for quick validation rather than long-term use.

- Managed Refactoring: Once revenue starts flowing and growth becomes the focus, this stage takes over. It tackles epistemic debt – the risk that arises when code becomes difficult to modify because the team doesn’t fully understand its logic [14]. Through structured and incremental refactoring, the system is cleaned up to support scaling without disruptions.

- Governance & Efficiency: This phase ensures your system is prepared for scalability and potential exit scenarios. It emphasizes standardizing abstractions, implementing automated verification, and avoiding fragmented integrations [14][16]. Additionally, this stage strengthens infrastructure, enhances security, and refines unit economics for long-term efficiency.

This layered approach ensures that technical debt is identified and addressed early, keeping systems robust as they grow.

Catching Tech Debt Early with Principal Council Review

To manage the balance between speed and stability, AlterSquare enforces early oversight through two layers of review: Principal Council Review and AI-Agent Assessment.

The Principal Council – made up of Taher (Architecture), Huzefa (Frontend/UX), Aliasgar (Backend), and Rohan (Infrastructure) – reviews systems before any code is written. Their job is to evaluate the architecture’s fit and ensure maintainability, preventing what’s known as the "LGTM reflex", where developers approve AI-generated code without deep scrutiny [15][16]. For high-impact features, they require evidence-gated merges, meaning features must pass independent tests, pass an architecture review, or include detailed documentation before being integrated into production [14].
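An evidence-gated merge can be expressed as a simple pre-merge check. The following is a minimal sketch, not AlterSquare’s actual tooling: the `MergeEvidence` fields and the `can_merge` function are hypothetical names, and the pass-through for low-impact changes is an assumption.

```python
from dataclasses import dataclass


@dataclass
class MergeEvidence:
    """Evidence attached to a pull request (hypothetical field names)."""
    independent_tests_passed: bool = False
    architecture_review_approved: bool = False
    has_detailed_documentation: bool = False


def can_merge(high_impact: bool, evidence: MergeEvidence) -> bool:
    """Allow the merge only if a high-impact change carries some evidence."""
    if not high_impact:
        # Assumption: low-impact changes follow the normal review path.
        return True
    return any((
        evidence.independent_tests_passed,
        evidence.architecture_review_approved,
        evidence.has_detailed_documentation,
    ))


print(can_merge(True, MergeEvidence()))
print(can_merge(True, MergeEvidence(independent_tests_passed=True)))
```

A stricter team could swap `any` for `all` to require every form of evidence on the riskiest changes.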

Alongside human oversight, the AI-Agent Assessment scans codebases for indicators like System Trust and Cyclomatic Complexity. A rise in complexity scores signals that AI-generated code could become too difficult to manage safely [14]. The assessment generates a Traffic Light Roadmap to classify issues as Critical, Managed, or Scale-Ready, giving teams a clear understanding of where technical debt is building and when intervention is necessary.

This dual-layered approach ensures systems remain reliable and scalable, even as they evolve.

Practical Steps to Fix the Productivity Paradox

These strategies work alongside the V2E framework to address the challenges of the AI productivity paradox. The goal is to tackle AI’s hidden costs head-on without losing momentum.

Run AI-Agent Scans to Check System Health

Many teams only notice codebase issues after something breaks, which can make fixes far more expensive. AlterSquare’s AI-Agent Assessment can help by scanning your system for problems like architectural coupling, security vulnerabilities, and performance bottlenecks before minor issues escalate into major failures. The scan focuses on two key metrics: System Trust (how safely your team can modify the code) and Cyclomatic Complexity (the number of independent paths in your code). A jump in complexity often signals overly tangled code that’s harder to manage [14].

The assessment generates a Traffic Light Roadmap that categorizes issues as Critical, Managed, or Scale-Ready, making it clear where intervention is needed. Running these scans quarterly or before major releases can help you catch and address problems early.
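To make the complexity metric concrete, here is a minimal sketch that approximates cyclomatic complexity for Python source using the standard `ast` module, then maps the score to the three roadmap labels. It is not AlterSquare’s assessment: the counting rules are a simplification, and the thresholds of 10 and 20 are hypothetical values chosen for illustration.

```python
import ast

# Node types that add a decision point (a simplified approximation).
_BRANCH_NODES = (ast.If, ast.For, ast.While, ast.ExceptHandler,
                 ast.IfExp, ast.comprehension)


def cyclomatic_complexity(source: str) -> int:
    """Approximate cyclomatic complexity: 1 + number of decision points."""
    complexity = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, _BRANCH_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra paths.
            complexity += len(node.values) - 1
    return complexity


def traffic_light(score: int) -> str:
    """Map a score to a roadmap label using hypothetical thresholds."""
    if score > 20:
        return "Critical"
    if score > 10:
        return "Managed"
    return "Scale-Ready"


sample = """
def classify(n):
    if n < 0 and n % 2 == 0:
        return "negative even"
    for i in range(n):
        if i == 3:
            return "has three"
    return "other"
"""

score = cyclomatic_complexity(sample)
print(score, traffic_light(score))
```

Tracking this score per module over time is more informative than any single reading, since the text above treats a jump in complexity as the warning sign.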

Once issues are identified, tie your refactoring efforts to key growth milestones for better alignment.

Refactor at Revenue Milestones, Not When Things Break

Refactoring only after a system failure is the most expensive way to address technical debt. Instead, plan your remediation efforts around revenue milestones. For instance, hitting $1M ARR is often a sign that scaling challenges may soon arise [17]. Use this milestone as an opportunity to modernize your system incrementally.

Focus your refactoring on high-velocity areas – sections of code that are updated daily or weekly – while leaving rarely modified parts untouched to avoid unnecessary complexity [18][3]. Consider progressive refactoring, which involves tackling modules one at a time, measuring the results, and then moving forward. This approach is more manageable than sweeping rewrites.
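One way to find high-velocity areas is to count how often each file appears in recent commit history. The sketch below assumes you feed it the text output of `git log --since="90 days ago" --name-only --pretty=format:`; the `hot_files` function and the sample paths are hypothetical.

```python
from collections import Counter


def hot_files(git_log_output: str, top_n: int = 5) -> list[tuple[str, int]]:
    """Count how often each file appears in `git log --name-only` output."""
    counts = Counter(
        line.strip() for line in git_log_output.splitlines() if line.strip()
    )
    return counts.most_common(top_n)


# Sample text standing in for real git output (one path per changed file,
# blank lines between commits).
sample_log = """
src/billing/invoice.py
src/api/routes.py

src/billing/invoice.py

src/billing/invoice.py
src/api/routes.py
docs/README.md
"""

for path, changes in hot_files(sample_log, top_n=2):
    print(f"{path}: {changes} changes")
```

Files at the top of this list are candidates for the first round of progressive refactoring, while files that never appear can usually be left alone.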

Adopt a two-round rule for AI-generated code: if a piece of code still fails after two debugging iterations, switch to writing it manually [15]. This keeps teams from sinking time into fixing code that should have been written correctly from the start.
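The two-round rule is easy to encode as an explicit decision so that it does not depend on individual judgment in the moment. This is a small illustrative sketch, and the function name and return strings are hypothetical.

```python
MAX_DEBUG_ROUNDS = 2  # the "two-round" limit described above


def next_step(debug_rounds_so_far: int, tests_pass: bool) -> str:
    """Decide what to do with an AI-generated change after a debugging round."""
    if tests_pass:
        return "submit for review"
    if debug_rounds_so_far >= MAX_DEBUG_ROUNDS:
        return "rewrite manually"
    return "debug again"


print(next_step(1, tests_pass=False))
print(next_step(2, tests_pass=False))
```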

Fit AI Tools to Your Workflow, Not the Other Way Around

To make these changes stick, customize AI tools to fit your current workflow rather than overhauling your processes to suit the AI. A common mistake is restructuring workflows entirely around AI capabilities, which can backfire. Instead, identify tasks that are low-risk but high-value – like research, boilerplate generation, or creating initial test scaffolding – where AI can speed up work without causing bottlenecks.

For critical tasks like payments, security, or regulatory logic, rely on manual development with strict human-in-the-loop validation [14]. Maintain Architecture Decision Records (ADRs) to give AI tools the structured context they need to generate code that aligns with your system’s boundaries [3].

For high-impact features, implement evidence-gated merges that require independent tests, architecture reviews, or detailed documentation before AI-generated code moves to production [14]. Additionally, use automated review gates to catch issues like style violations, SQL injection risks, or hardcoded credentials before a human reviewer even sees the pull request [15][4].
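An automated review gate can start as a small script that scans the added lines of a diff before a reviewer is assigned. The sketch below is a deliberately simple illustration: the two regular expressions and the `scan_diff` function are hypothetical, and a production gate would rely on a dedicated scanner with a much larger rule set.

```python
import re

# Hypothetical, deliberately simple patterns for illustration only.
RULES = {
    "hardcoded credential": re.compile(
        r"(password|secret|api_key|token)\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE
    ),
    # Flags execute() calls built from f-strings or string concatenation.
    "possible SQL injection": re.compile(
        r"execute\(\s*(f['\"]|['\"][^'\"]*['\"]\s*\+)"
    ),
}


def scan_diff(diff_text: str) -> list[tuple[int, str]]:
    """Return (line number within the diff, rule name) for each flagged added line."""
    findings = []
    for number, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect added lines, skipping the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


sample_diff = '''\
+++ b/app/db.py
+API_KEY = "sk-test-123"
+cursor.execute(f"SELECT * FROM users WHERE id = {user_id}")
+cursor.execute("SELECT * FROM users WHERE id = %s", (user_id,))
-old_line = 1
'''

for line_number, rule in scan_diff(sample_diff):
    print(f"diff line {line_number}: {rule}")
```

The parameterized query on the fourth line is not flagged, which is the behavior you want: the gate should block risky patterns without penalizing the safe alternative.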

Conclusion

Looking at the hidden costs and challenges, it’s evident that a thoughtful strategy is critical. AI tools may promise faster development – teams reportedly merge 98% more pull requests [5] – but this speed often masks deeper issues. Increased velocity can lead to mounting complexity and technical debt, creating a situation where shipping more code doesn’t necessarily mean delivering better products or achieving business goals.

The answer isn’t to abandon AI tools, but to apply the right boundaries. AlterSquare’s V2E framework is one way to align development practices with your business’s current stage, addressing epistemic debt early to ensure today’s speed doesn’t become tomorrow’s obstacle. As Stanislav Komarovsky aptly said:

"Technical debt makes code hard to change. Epistemic debt makes code dangerous to change." [14]

To build for sustainable growth, teams need architectural safeguards, evidence-based merges, and a shift in focus – from counting lines of code to evaluating system health. Use AI-Agent scans to catch problems early, refactor during revenue milestones instead of after failures, and integrate AI tools into existing workflows rather than overhauling everything around them.

The most successful teams won’t just produce more code – they’ll manage complexity effectively while staying adaptable for the long haul. Striking the right balance between speed and maintainability leads to systems that are both scalable and resilient.

FAQs

How can I tell if AI is slowing my team down?

Signs to watch for include longer sprint cycles, growing review backlogs, or developers spending too much time fixing AI-generated output. If seasoned engineers report a drop in velocity or the team feels more stressed even though tasks are being completed faster, it could mean that AI is introducing more complexity or causing mental fatigue. Focus on overall productivity metrics – not just how quickly tasks are completed – to determine if AI is genuinely aiding progress or creating more hurdles.
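One delivery-level metric worth tracking is the median time from opening a pull request to merging it. The sketch below assumes you can export pull request timestamps from your code host; the dictionary keys and sample data are hypothetical.

```python
from datetime import datetime
from statistics import median


def median_cycle_hours(pull_requests: list[dict]) -> float:
    """Median hours from PR opened to PR merged (unmerged PRs are ignored).

    Raises statistics.StatisticsError if no PR has been merged.
    """
    durations = [
        (pr["merged_at"] - pr["opened_at"]).total_seconds() / 3600
        for pr in pull_requests
        if pr.get("merged_at") is not None
    ]
    return median(durations)


sample_prs = [
    {"opened_at": datetime(2026, 1, 5, 9), "merged_at": datetime(2026, 1, 5, 17)},   # 8 h
    {"opened_at": datetime(2026, 1, 6, 9), "merged_at": datetime(2026, 1, 7, 9)},    # 24 h
    {"opened_at": datetime(2026, 1, 7, 10), "merged_at": datetime(2026, 1, 7, 22)},  # 12 h
    {"opened_at": datetime(2026, 1, 8, 9), "merged_at": None},                       # still open
]

print(f"Median cycle time: {median_cycle_hours(sample_prs):.1f} hours")
```

Comparing this number for the months before and after AI adoption gives a clearer signal than counting merged pull requests or lines of code.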

What rules should we set for AI-generated pull requests?

To handle AI-generated pull requests effectively, it’s crucial to prioritize thorough code reviews and rigorous testing. These steps help tackle the added complexity and minimize potential bugs. Keep the scope of AI-generated changes narrow and manageable, focusing on tasks like boilerplate code rather than critical logic.

Make sure all changes are well-documented, and clearly define the AI’s role in the development process. This clarity helps developers understand where and how AI is contributing. Regular training for developers is also essential so they can adapt to working alongside AI tools.

Finally, keep a close eye on the AI’s impact through continuous monitoring. This approach ensures quality and stability, striking the right balance between development speed and long-term maintainability.

When should we refactor AI-heavy code during startup growth?

Refactor AI-heavy code at planned growth milestones, such as reaching $1M ARR, rather than waiting until technical debt spirals out of control or starts to hinder scalability and system stability. This matters most after rapid deployments into older systems, where early refactoring helps prevent long-term maintenance headaches and supports steady growth.

