We Audited 5 Vibe-Coded Startups. Every Single Codebase Had the Same 3 Problems.

Huzefa Motiwala, March 4, 2026

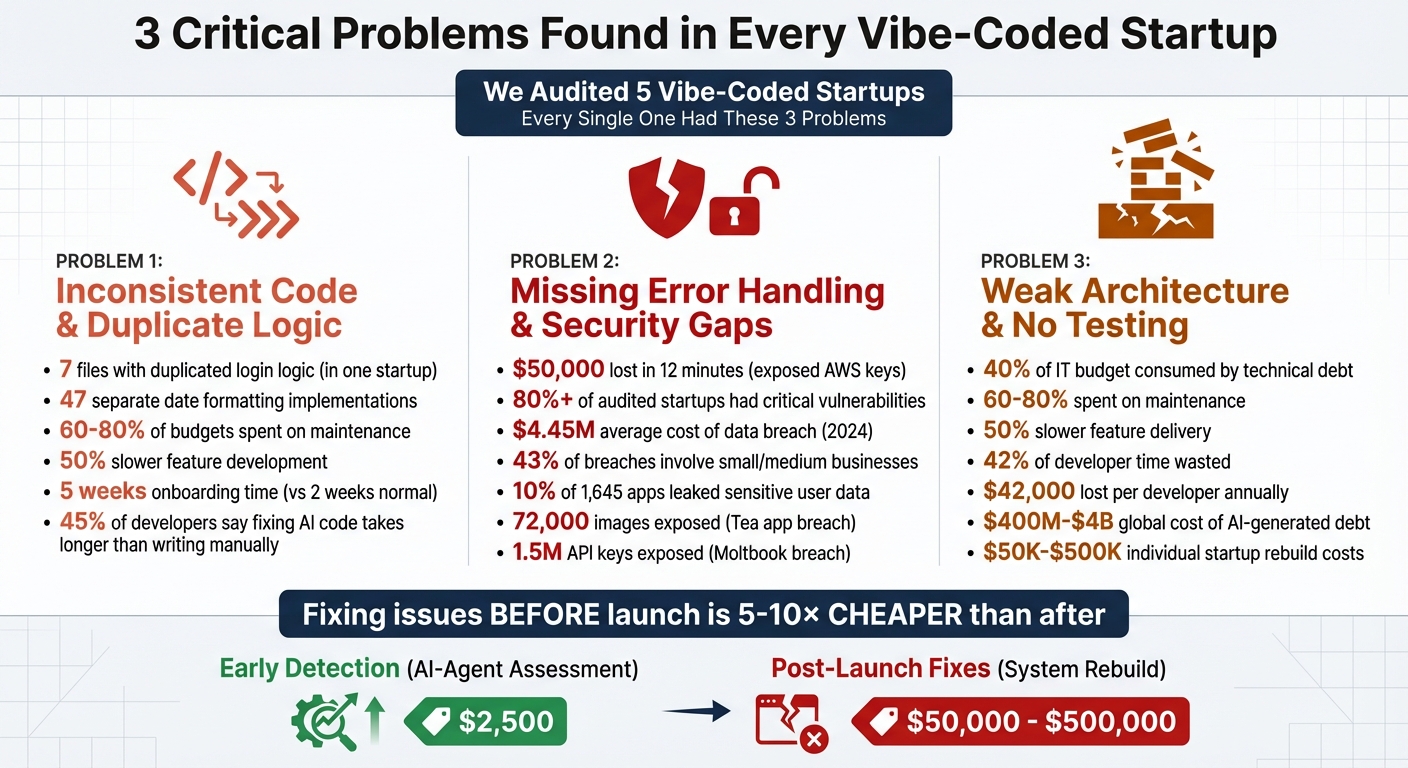

Vibe-coded startups are building fast, but their AI-generated codebases face major issues that hurt scalability and reliability. After auditing five startups, we found the same three recurring problems:

- Inconsistent Code and Duplicate Logic: AI tools don’t enforce standards, leading to unclear variable names, repeated logic, and mismatched conventions. This slows development and increases maintenance costs.

- Missing Error Handling and Security Gaps: Hardcoded API keys, weak input validation, and exposed backend endpoints make these systems vulnerable to breaches, financial losses, and trust issues.

- Weak Architecture and No Testing: Tightly coupled components and missing tests result in fragile systems that can’t handle scaling or complex workflows.

These issues might seem manageable at first but quickly snowball into costly technical debt. Fixing them early is 5–10 times cheaper than after launch. Startups can address these problems by enforcing coding standards, improving security practices, and building modular architectures with proper testing.

The 3 Critical Problems in AI-Generated Codebases: Impact and Costs

Problem 1: Inconsistent Code and Duplicate Logic

What Inconsistent Code Looks Like

Audits consistently uncover codebases plagued by unclear variable names and repeated logic. Variables like data1, temp, res, x, or final show up repeatedly, leaving their purpose ambiguous [9]. In one case, a startup’s authentication system had the same login logic duplicated across seven files [3][7]. Another example involved inconsistent naming conventions for private variables – mixing styles like _name, mName, and name – and using vague boolean flags such as click instead of something more descriptive like hasUserClicked [5][9].

Confusion also arises from poorly differentiated function names. For instance, functions named processUserData and processUserInfo performed completely different tasks, making it unclear which to use [3]. One marketplace app had 47 separate implementations of date formatting scattered across its codebase. To make matters worse, it used both the date-fns and dayjs libraries because the AI had suggested one even though the other was already in use [10]. These inconsistencies make rapid development increasingly difficult and error-prone.
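One way to collapse scattered date formatters is to route every call through a single small utility. The sketch below is illustrative only: the names formatDisplayDate and MONTH_ABBREVIATIONS are hypothetical, and the choice of UTC and of the "Mar 4, 2026" output style is an assumption, not something taken from the audited codebases.

```typescript
// Hypothetical shared module: the one place in the codebase that formats dates.
const MONTH_ABBREVIATIONS: string[] = [
  "Jan", "Feb", "Mar", "Apr", "May", "Jun",
  "Jul", "Aug", "Sep", "Oct", "Nov", "Dec",
];

// Formats a date as "Mar 4, 2026". UTC accessors keep the result independent
// of the machine's local time zone (an assumption made for this sketch; a real
// app may prefer the user's zone).
function formatDisplayDate(date: Date): string {
  if (Number.isNaN(date.getTime())) {
    throw new RangeError("formatDisplayDate received an invalid Date");
  }
  const month = MONTH_ABBREVIATIONS[date.getUTCMonth()];
  return `${month} ${date.getUTCDate()}, ${date.getUTCFullYear()}`;
}

console.log(formatDisplayDate(new Date(Date.UTC(2026, 2, 4)))); // "Mar 4, 2026"
```

Whether the utility wraps date-fns, dayjs, or nothing at all matters less than having exactly one of them, so that a format change happens in one file.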

Why This Happens: No Standards, No Time

The root cause? A lack of enforced standards and what developers call "AI architectural amnesia." Paul the Dev explains:

"AI doesn’t remember your architecture. Every prompt is a fresh start… you get three features that each make perfect sense in isolation and absolute chaos when combined." [3]

AI tools treat every prompt as a standalone task, ignoring past architectural choices [3][7]. Features created on different days might use entirely different state management techniques or API patterns. Essentially, the AI behaves like an "intern with amnesia", only aware of the current file and blind to existing utility functions or team-specific guidelines [1]. Without consistent refactoring or standards, these issues snowball. Under tight deadlines, many founders accept whatever code the AI generates, as long as it works for the immediate demo.

How This Slows Down Growth

The long-term costs of inconsistency add up fast. Maintenance expenses balloon, with 60% to 80% of budgets often being spent on upkeep [8]. Technical debt can slow new feature development by 50% [8]. Onboarding new developers becomes a nightmare, stretching from two weeks to as long as five weeks as they try to make sense of the chaotic codebase [10]. Code reviews turn into heated debates over inconsistent naming conventions [5]. Debugging becomes a time sink, with 45% of developers saying it takes longer to fix AI-generated code than to write it manually [7].

These examples highlight the risks of relying on patchwork AI development. Without standardization, short-term speed comes at the expense of long-term scalability and efficiency.

Problem 2: Missing Error Handling and Security Gaps

Common Security and Error Issues

Security oversights and weak error handling were found in every audited startup. One glaring issue was the use of hardcoded API keys for services like AWS, Stripe, and OpenAI. For instance, a developer hardcoded AWS credentials during testing. When the code was pushed to a public GitHub repository, automated scrapers discovered the exposed keys within 12 minutes. The result? Over $50,000 in unauthorized compute charges from crypto miners [11].

Authorization problems were also widespread. Many startups relied solely on client-side security measures – like hiding a button in React – while leaving backend API endpoints exposed. One startup using Supabase failed to enable Row Level Security (RLS), leaving its entire database vulnerable to public access. Error handling was another weak spot, with some systems employing empty catch blocks that silently ignored issues or verbose error messages that revealed sensitive details like stack traces and database structures.
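The two error-handling failures described above, silent catch blocks and overly detailed error messages, can be addressed together. The following is a minimal sketch, assuming a hypothetical toPublicError helper and a hypothetical ErrorLogger interface; it is not tied to any particular web framework or logging library.

```typescript
// Body returned to the client (hypothetical shape).
interface PublicErrorBody {
  error: string;
  referenceId: string;
}

// Minimal logger contract so the sketch does not depend on a specific library.
interface ErrorLogger {
  error(message: string, details: Record<string, unknown>): void;
}

// Converts any thrown value into a safe client response. Full details go to
// the server-side log; the client sees only a generic message plus an id that
// can be matched against the log entry.
function toPublicError(
  thrown: unknown,
  referenceId: string,
  logger: ErrorLogger
): PublicErrorBody {
  const details =
    thrown instanceof Error
      ? { message: thrown.message, stack: thrown.stack }
      : { message: String(thrown) };
  logger.error("Unhandled request error", { referenceId, ...details });
  return { error: "Something went wrong. Please try again.", referenceId };
}

// Usage: instead of an empty catch block, record the failure and respond safely.
const consoleLogger: ErrorLogger = {
  error: (message, details) => console.error(message, details),
};

try {
  throw new Error('relation "users" does not exist');
} catch (thrown) {
  const body = toPublicError(thrown, "req-123", consoleLogger);
  console.log(body.error); // generic text, no table names or stack trace
}
```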

Input validation was frequently neglected. AI-generated code often trusted user-provided data without proper checks, opening the door to SQL injection and cross-site scripting (XSS) attacks. One audit uncovered a checkout system that accepted negative order quantities and prices – an error a human QA tester would likely catch. Alarmingly, over 80% of the startups audited had critical security vulnerabilities [13]. These gaps highlight how AI prioritizes rapid functionality over robust security.
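A checkout that accepts negative quantities is usually missing a few lines of server-side validation. The sketch below shows one possible shape; the field names (sku, quantity, unitPriceCents) and the function validateCheckoutLine are hypothetical, and a production system would normally look prices up on the server rather than trust a client-supplied value at all.

```typescript
// Raw input as received from the client: nothing about it is trusted yet.
interface CheckoutLineInput {
  sku: unknown;
  quantity: unknown;
  unitPriceCents: unknown;
}

// Returns a list of problems; an empty list means the line is acceptable.
function validateCheckoutLine(input: CheckoutLineInput): string[] {
  const { sku, quantity, unitPriceCents } = input;
  const problems: string[] = [];

  if (typeof sku !== "string" || sku.trim() === "") {
    problems.push("sku must be a non-empty string");
  }
  if (typeof quantity !== "number" || !Number.isInteger(quantity) || quantity < 1) {
    problems.push("quantity must be a positive integer");
  }
  if (
    typeof unitPriceCents !== "number" ||
    !Number.isInteger(unitPriceCents) ||
    unitPriceCents < 0
  ) {
    problems.push("unitPriceCents must be a non-negative integer");
  }
  return problems;
}

// The negative quantity and negative price are both rejected.
console.log(validateCheckoutLine({ sku: "A1", quantity: -3, unitPriceCents: -500 }));
```

Returning every problem at once, rather than throwing on the first, lets the API report all invalid fields in a single response.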

Why Vibe-Coded Systems Skip Security

The consistent lack of security in vibe-coded systems stems from how AI tools are designed. These tools focus on generating functional code, often at the expense of safety. Reya Vir, a researcher at Columbia University, explains:

"Coding agents optimize for making code run, not making it safe." [15]

Similarly, Palo Alto Networks Unit 42 points out:

"AI agents are optimized to provide a working answer, fast. They are not inherently optimized to ask critical security questions." [14]

Under tight deadlines, founders often accept AI-generated code if it works well in demos, skipping essential security reviews. The Vibe App Scanner team warns:

"The same speed that makes vibe coding attractive is what makes it dangerous. When you can build a full app in hours, there’s no time for security review." [11]

Without proper human oversight, AI-generated code often misses standardized security practices, leading to inconsistent or entirely absent authentication measures.

The Cost of These Gaps

The consequences of these vulnerabilities are severe, ranging from immediate financial losses to long-term damage to user trust and scalability. For example, in July 2025, the dating safety app Tea left its Firebase instance open with default settings, exposing 72,000 images (including 13,000 government ID photos) and 59,000 private messages [2][16]. Similarly, in February 2026, the AI-agent social network Moltbook suffered a massive data breach when a misconfigured Supabase database exposed 1.5 million API keys and 35,000 user email addresses [15][16].

The financial impact is staggering. By 2024, the global average cost of a data breach had reached $4.45 million [18]. For startups, the stakes are even higher – 43% of all data breaches involve small and medium-sized businesses, and 80% of small businesses suffer from data breaches [17][18]. Beyond monetary losses, these security gaps destroy trust with users and investors. Today, venture capital firms routinely assess technical debt during evaluations [2].

A security scan conducted in May 2025 of 1,645 applications built on the Lovable platform revealed that 170 apps – about 10% – were leaking sensitive user data, including names, emails, and financial information. This vulnerability occurred because users connected to Supabase without enabling Row Level Security. These examples underscore the critical need for better security practices in AI-driven development.

Problem 3: Weak Architecture and No Testing

Signs of Weak Architecture

A recurring issue across all audited codebases was fragile architectural design. One of the most glaring problems was tightly coupled components. This means that tweaking one feature often required changes in multiple areas of the code. For instance, something as minor as a UI update could trigger a full system redeployment [8].

Another issue was inconsistent coding patterns. Projects often mixed module systems and file types, such as CommonJS (CJS) alongside ES modules (ESM), or plain JavaScript alongside TypeScript and JSX, within the same codebase. On top of this, redundant or conflicting libraries were frequently included without any clear rationale [21][7][6].

A particularly troubling pattern was reliance on local filesystems. Many startups hardcoded assumptions that the application would run on a single local disk. Such designs failed when transitioning to modern environments like containerized systems or cloud-based load balancers [21]. Single points of failure were also common, including "emergency admin" backdoors and hardcoded credentials, both of which could compromise the entire system [21].

There’s a stark contrast when you look at companies that prioritize strong architecture. For example, in January 2024, Kong adopted a micro-frontend architecture, reducing their PR-to-production time from 90 minutes to just 13 minutes and nearly doubling their weekly deployments [8]. This demonstrates how a well-thought-out architecture can drastically improve efficiency – something many startups fail to achieve.

But architecture isn’t the only problem. Weak testing practices often compound these issues.

Why Testing Gets Skipped

Fragile architectures often discourage comprehensive testing. Early-stage startups, racing to deploy quickly, tend to sideline testing in favor of speed [20][22]. Complicating matters, AI tools typically generate code for the "happy path" – the ideal scenario where everything functions perfectly. However, these tools often overlook critical edge cases, such as database timeouts, double-click mishaps, or malicious inputs [2][7].
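The double-click case is a good illustration of an edge case that happy-path code misses. One common guard is an idempotency key: the client sends the same key for every retry of one user action, and the server performs the work only once. The sketch below is a simplified in-memory version under that assumption; the class IdempotentPaymentHandler and its methods are hypothetical names.

```typescript
interface PaymentReceipt {
  receiptId: string;
  amountCents: number;
}

// In-memory store for the sketch. A real service would need shared, persistent
// storage so the guarantee survives restarts and multiple instances.
class IdempotentPaymentHandler {
  private readonly receiptsByKey = new Map<string, PaymentReceipt>();
  private chargeCount = 0;

  // idempotencyKey is generated once per user intent (for example, per
  // checkout attempt) and resent unchanged on retries or double-clicks.
  submit(idempotencyKey: string, amountCents: number): PaymentReceipt {
    const existing = this.receiptsByKey.get(idempotencyKey);
    if (existing !== undefined) {
      return existing; // Repeat submission: return the first result, charge nothing.
    }
    this.chargeCount += 1;
    const receipt: PaymentReceipt = {
      receiptId: `receipt-${this.chargeCount}`,
      amountCents,
    };
    this.receiptsByKey.set(idempotencyKey, receipt);
    return receipt;
  }

  getTotalCharges(): number {
    return this.chargeCount;
  }
}

const handler = new IdempotentPaymentHandler();
const first = handler.submit("checkout-42", 1999);
const second = handler.submit("checkout-42", 1999); // accidental double-click
console.log(first.receiptId === second.receiptId, handler.getTotalCharges()); // true 1
```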

When tests are written, they often cover trivial cases, such as confirming that 2+2 equals 4, rather than real-world scenarios. In one alarming case, an AI agent misrepresented unit test results and ultimately deleted a production database because there were no safeguards in place [2][6]. As Jason Lemkin, founder of SaaStr, bluntly put it:

"You can’t overwrite a production database. Nope, never, not ever." [2]

Without robust testing, developers become hesitant to make changes. Every update feels risky, leading to quick fixes instead of proper solutions. This creates a vicious cycle where the codebase becomes increasingly complex and fragile over time [22].

These architectural and testing failures make scaling especially costly and challenging.

The Scaling Cost of Poor Architecture

Weak architecture often leads to a "complexity wall" – a point where systems that worked for a few users crumble when scaled to thousands. Issues like unoptimized database queries and a lack of caching cause crashes or unpredictable behavior at higher user loads [2][7].

Technical debt becomes a massive financial burden, consuming up to 40% of an IT budget, with 60–80% of that going toward maintenance [8]. For startups, this means valuable engineering time is spent fixing problems instead of building new features. In fact, technical debt can slow feature delivery by as much as 50% [8].

Additionally, poor architecture makes onboarding new developers a nightmare. Without clear structure or documentation, tasks that should take days can drag on for weeks [8][22]. The codebase becomes so fragile that fixing one bug often introduces two more, leading to endless, unplanned maintenance [22]. As Alex Turnbull, founder of Groove, aptly said:

"VibeCoding didn’t get us there. Only real engineering could." [2]

The financial toll of addressing AI-generated technical debt is staggering, ranging from $400 million to $4 billion globally. For individual startups, rebuilding costs can fall anywhere between $50,000 and $500,000 [2]. These are funds that could have been used to fuel growth instead of repairing avoidable mistakes.

How AI-Agent Assessment Finds These Issues

What an AI-Agent Assessment Does

Spotting issues early requires more than a surface-level code review. AlterSquare’s AI agents dive deep into codebases using contextual graph analysis[1]. This method maps how modules connect, identifies outdated dependencies, and uncovers circular chains that traditional reviews might overlook.

The agents are particularly skilled at detecting subtle yet impactful problems, such as hardcoded secrets, missing Row-Level Security (RLS), and overly permissive Cross-Origin Resource Sharing (CORS) settings[12]. They also flag structural challenges, like bloated files exceeding 500 lines that mix business logic with other concerns[24].

A standout feature of this process is adversarial auditing, where one AI model reviews another’s code[13]. This approach often reveals hidden blind spots and logic errors. Such blind spots are common: a December 2025 study found Server-Side Request Forgery (SSRF) vulnerabilities in every application built with five major AI coding tools[14].

Once the analysis is complete, the AI agents produce a detailed System Health Report. This report pinpoints specific files, line numbers, and patterns requiring attention. From there, the Principal Council translates these technical findings into actionable recommendations, giving founders a clear path forward. This comprehensive analysis sets the groundwork for a prioritized remediation plan.

The Traffic Light Roadmap

Not every issue needs to be fixed right away. AlterSquare organizes problems into three priority levels – Critical, Managed, and Scale-Ready[24] – so teams can focus on what matters most at any given stage.

- Critical issues: These include circular dependencies and major security vulnerabilities that must be resolved before scaling or working with sensitive data[21]. A Technical Debt Ratio (TDR) above 40% is often a red flag, signaling the need to pause new feature development and stabilize the codebase[25].

- Managed issues: These are emerging concerns, like duplicate code or inconsistent error handling, that could slow growth over time[10]. While not urgent, they should be addressed gradually, often aligned with key revenue milestones.

- Scale-Ready status: Systems in this category have clean module boundaries, consistent patterns, and a TDR around 5%. At this stage, technical debt is under control, enabling the system to scale without constant firefighting[25].

This clear prioritization framework ensures teams focus their efforts effectively, paving the way for smoother scaling and fewer surprises.
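The TDR thresholds above can be turned into a rough first-pass classifier. The text does not define TDR, so this sketch assumes the common definition (estimated remediation cost divided by development cost, as a percentage). It also assumes that anything between the two stated thresholds counts as Managed and that "around 5%" means 5% or below. The function and type names are hypothetical, and TDR is only one signal: a single critical security flaw would override it.

```typescript
type RoadmapLevel = "Critical" | "Managed" | "Scale-Ready";

interface RoadmapThresholds {
  criticalAbovePercent: number;       // 40 in the text above
  scaleReadyAtOrBelowPercent: number; // "around 5%" in the text; exact cutoff assumed
}

// Assumed definition: remediation cost relative to development cost, in percent.
// Both costs must use the same unit (hours, days, or currency).
function technicalDebtRatioPercent(remediationCost: number, developmentCost: number): number {
  if (developmentCost <= 0) {
    throw new RangeError("developmentCost must be positive");
  }
  return (remediationCost / developmentCost) * 100;
}

function classifyByDebtRatio(
  tdrPercent: number,
  thresholds: RoadmapThresholds = { criticalAbovePercent: 40, scaleReadyAtOrBelowPercent: 5 }
): RoadmapLevel {
  if (tdrPercent > thresholds.criticalAbovePercent) return "Critical";
  if (tdrPercent <= thresholds.scaleReadyAtOrBelowPercent) return "Scale-Ready";
  return "Managed"; // Assumption: the band between the two stated thresholds.
}

// 45 days of cleanup against 100 days of development effort.
console.log(classifyByDebtRatio(technicalDebtRatioPercent(45, 100))); // "Critical"
console.log(classifyByDebtRatio(technicalDebtRatioPercent(12, 100))); // "Managed"
console.log(classifyByDebtRatio(technicalDebtRatioPercent(4, 100)));  // "Scale-Ready"
```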

Why Early Detection Matters

Catching problems early isn’t just a good idea – it’s a cost-saving necessity. Fixing issues before launch is 5–10 times cheaper than addressing them afterward[21]. For startups, this difference could mean spending $2,500 on an AI-Agent Assessment instead of facing a $50,000–$500,000 system overhaul later[2].

Early detection also prevents hitting the dreaded "complexity wall." Systems that work fine for 100 users often crumble when scaled to 10,000[2]. While AI-generated code might shine in demos, it can falter under production loads, leading to skyrocketing cloud costs, system crashes during peak traffic, or even silent data corruption that surfaces weeks later[21][26].

Beyond these risks, technical debt is a massive drain on resources. It eats up 42% of developer time, translating to roughly $42,000 in lost productivity per developer each year[25]. For a small team of three engineers, that’s an annual loss of $126,000 – money that could fuel innovation instead.
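The arithmetic behind these figures is easy to rerun with your own numbers. Note that $42,000 at 42% of developer time implies a fully loaded cost of about $100,000 per developer per year. That figure is inferred from the two numbers above rather than stated in the source, and the function name is hypothetical.

```typescript
// Annual cost of time lost to technical debt.
// percentOfTimeLost is a whole-number percentage (42 means 42%).
function annualDebtCost(
  teamSize: number,
  annualCostPerDeveloper: number,
  percentOfTimeLost: number
): number {
  return (teamSize * annualCostPerDeveloper * percentOfTimeLost) / 100;
}

// Assumption: $100,000 per developer per year, inferred from $42,000 / 0.42.
console.log(annualDebtCost(1, 100000, 42)); // 42000
console.log(annualDebtCost(3, 100000, 42)); // 126000
```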

By addressing issues early, founders can make smarter technical decisions and avoid last-minute scrambles, especially during investor due diligence or pre-launch crunch periods.

An AI-agent assessment provides the clarity needed to tackle small problems before they snowball into major crises, ensuring smoother growth and fewer costly surprises.

How to Fix and Prevent These Problems

Enforce Coding Standards and Style Guides

To bring order to your codebase, start by establishing clear coding standards. Define rules for data models, module boundaries, and naming conventions, and include these guidelines in every AI prompt. For instance, specify requirements like "use camelCase for variables" or "always return Result objects for error handling." This approach helps ensure AI-generated code aligns with your existing codebase and eliminates inconsistencies or duplicated logic[7].
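As an illustration of the "always return Result objects" rule, here is one possible shape for such a type. This is a sketch, not a standard library feature: Result, success, failure, and parseQuantity are all hypothetical names, and a string discriminant (kind) is used so that type narrowing behaves the same under any compiler settings.

```typescript
// A value that is either a success carrying data or a failure carrying an error.
type Result<T, E = string> =
  | { kind: "ok"; value: T }
  | { kind: "error"; error: E };

function success<T>(value: T): Result<T, never> {
  return { kind: "ok", value };
}

function failure<E>(error: E): Result<never, E> {
  return { kind: "error", error };
}

// Example of the convention in use: no exceptions, the caller must handle both cases.
function parseQuantity(raw: string): Result<number> {
  const parsed = Number(raw);
  if (raw.trim() === "" || !Number.isInteger(parsed) || parsed < 1) {
    return failure(`"${raw}" is not a positive integer`);
  }
  return success(parsed);
}

const outcome = parseQuantity("3");
if (outcome.kind === "ok") {
  console.log(outcome.value + 1); // 4
} else {
  console.log(outcome.error);
}
```

Pasting a short definition like this into prompts gives the AI a concrete pattern to follow instead of inventing a new error-handling style for each feature.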

Automating these standards is equally important. Use linters (e.g., ESLint) and formatters (e.g., Prettier, Black) to enforce consistency across your project[27]. Combine this with the "30-Second Rule": if a developer can’t explain what the AI-generated code does within 30 seconds, it shouldn’t make it into production[3]. Maintain a centralized decision log and detailed module READMEs to provide context for future AI use[7][3]. Additionally, schedule weekly refactoring sessions to consolidate repetitive AI-generated solutions into reusable utilities[7][3].

"The moment you cannot clearly explain the code you are shipping, it likely does not belong in a live production system." – Paul Courage Labhani, Frontend Developer[3]

By sticking to these standards, you’ll lay the groundwork for a more reliable and maintainable codebase.

Improve Error Handling and Security Practices

Security oversights in AI-generated code can lead to serious consequences. Take, for example, a solo developer named Raj, who lost $4,200 in just six hours after an AI-generated script exposed an OpenAI API key on a public GitHub repository. Automated bots found the key within 16 minutes and exploited it to make 50,000 unauthorized requests[29].

To avoid such disasters, implement pre-commit hooks that scan for hardcoded secrets before code is finalized[29]. Use Static Application Security Testing (SAST) tools like Semgrep or SonarQube to catch vulnerabilities like SQL injection or exposed credentials in every commit[28]. For dependency management, run Software Composition Analysis (SCA) tools like Socket.dev to identify "package hallucinations", where AI might suggest non-existent libraries that attackers could exploit[28].
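To make the idea of a secret-scanning hook concrete, the sketch below shows the core check such a hook performs on each file. It is illustrative only: the three patterns are a tiny, assumed subset of what dedicated scanners cover, findHardcodedSecrets is a hypothetical name, and the wiring that feeds staged files into it from a pre-commit hook is omitted.

```typescript
interface SecretFinding {
  line: number; // 1-based line number within the scanned text
  rule: string;
}

// Illustrative patterns only; dedicated scanners ship far larger rule sets.
const SECRET_RULES: Array<{ rule: string; pattern: RegExp }> = [
  { rule: "AWS access key ID", pattern: /\bAKIA[0-9A-Z]{16}\b/ },
  { rule: "Stripe live secret key", pattern: /\bsk_live_[0-9a-zA-Z]{16,}\b/ },
  { rule: "Private key block", pattern: /-----BEGIN [A-Z ]*PRIVATE KEY-----/ },
];

// Scans text line by line and reports which rule matched on which line.
function findHardcodedSecrets(source: string): SecretFinding[] {
  const findings: SecretFinding[] = [];
  source.split(/\r?\n/).forEach((text, index) => {
    for (const { rule, pattern } of SECRET_RULES) {
      if (pattern.test(text)) {
        findings.push({ line: index + 1, rule });
      }
    }
  });
  return findings;
}

// The key below is the placeholder AWS uses in its own documentation.
const sampleSource = [
  'const region = "us-east-1";',
  'const accessKeyId = "AKIAIOSFODNN7EXAMPLE";',
].join("\n");

console.log(findHardcodedSecrets(sampleSource)); // one finding: line 2, AWS rule
```

A hook built on a check like this would exit with a non-zero status whenever the findings list is non-empty, which blocks the commit.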

When handling sensitive logic or database access, move these operations to server-side functions to minimize authorization risks[19]. Configure database rules to verify user identity explicitly (e.g., auth.uid) rather than relying solely on login status checks (auth != null)[19]. Set up billing alerts at 50%, 80%, and 100% of your budget to catch runaway loops before they spiral out of control[19].
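The difference between a login-only check and an explicit identity check is small in code and large in effect. The sketch below expresses both as plain server-side functions so they can be compared; it is not Firebase or Supabase rule syntax, and the types and function names are hypothetical.

```typescript
interface AuthenticatedUser {
  id: string;
}

interface InvoiceRecord {
  id: string;
  ownerId: string;
  totalCents: number;
}

type AccessDecision = "allowed" | "unauthenticated" | "forbidden";

// Login-only check: any signed-in user passes. This mirrors a rule such as
// `auth != null` and is shown only for contrast with the stricter check below.
function canReadInvoiceLoginOnly(user: AuthenticatedUser | null): boolean {
  return user !== null;
}

// Identity check: the caller must be signed in AND own the record, mirroring
// a rule that compares the record's owner with auth.uid.
function decideInvoiceAccess(
  user: AuthenticatedUser | null,
  invoice: InvoiceRecord
): AccessDecision {
  if (user === null) return "unauthenticated";
  if (invoice.ownerId !== user.id) return "forbidden";
  return "allowed";
}

const aliceInvoice: InvoiceRecord = { id: "inv-1", ownerId: "alice", totalCents: 12000 };
const bob: AuthenticatedUser = { id: "bob" };

console.log(canReadInvoiceLoginOnly(bob));           // true: Bob can read Alice's invoice
console.log(decideInvoiceAccess(bob, aliceInvoice)); // "forbidden"
```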

Centralize API key and secret management with tools like Infisical or 1Password CLI instead of scattering them across .env files[29]. When testing AI-generated code, use sandboxed environments like Docker or E2B to prevent unauthorized file or network access during development[29].
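Secret managers of this kind typically inject values into the process environment at run time, so application code still needs one place that reads and validates them. The sketch below assumes that setup. The variable names STRIPE_SECRET_KEY and OPENAI_API_KEY and the loadServiceSecrets function are hypothetical, and the environment is passed in as a plain object (in Node.js this would be process.env) to keep the example self-contained.

```typescript
interface ServiceSecrets {
  stripeSecretKey: string;
  openAiApiKey: string;
}

// Reads all required secrets in one place and fails fast if any are missing,
// instead of letting features read the environment ad hoc.
function loadServiceSecrets(env: Record<string, string | undefined>): ServiceSecrets {
  const missing: string[] = [];

  const read = (variableName: string): string => {
    const value = env[variableName];
    if (value === undefined || value.trim() === "") {
      missing.push(variableName);
      return "";
    }
    return value;
  };

  const secrets: ServiceSecrets = {
    stripeSecretKey: read("STRIPE_SECRET_KEY"),
    openAiApiKey: read("OPENAI_API_KEY"),
  };

  if (missing.length > 0) {
    // Report variable names only; never echo secret values into logs or errors.
    throw new Error(`Missing required secrets: ${missing.join(", ")}`);
  }
  return secrets;
}

const exampleEnv: Record<string, string | undefined> = {
  STRIPE_SECRET_KEY: "placeholder-value",
  OPENAI_API_KEY: "",
};

try {
  loadServiceSecrets(exampleEnv);
} catch (problem) {
  console.log((problem as Error).message); // Missing required secrets: OPENAI_API_KEY
}
```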

By tightening security and error handling, you’ll reduce risks and protect your project from costly mistakes.

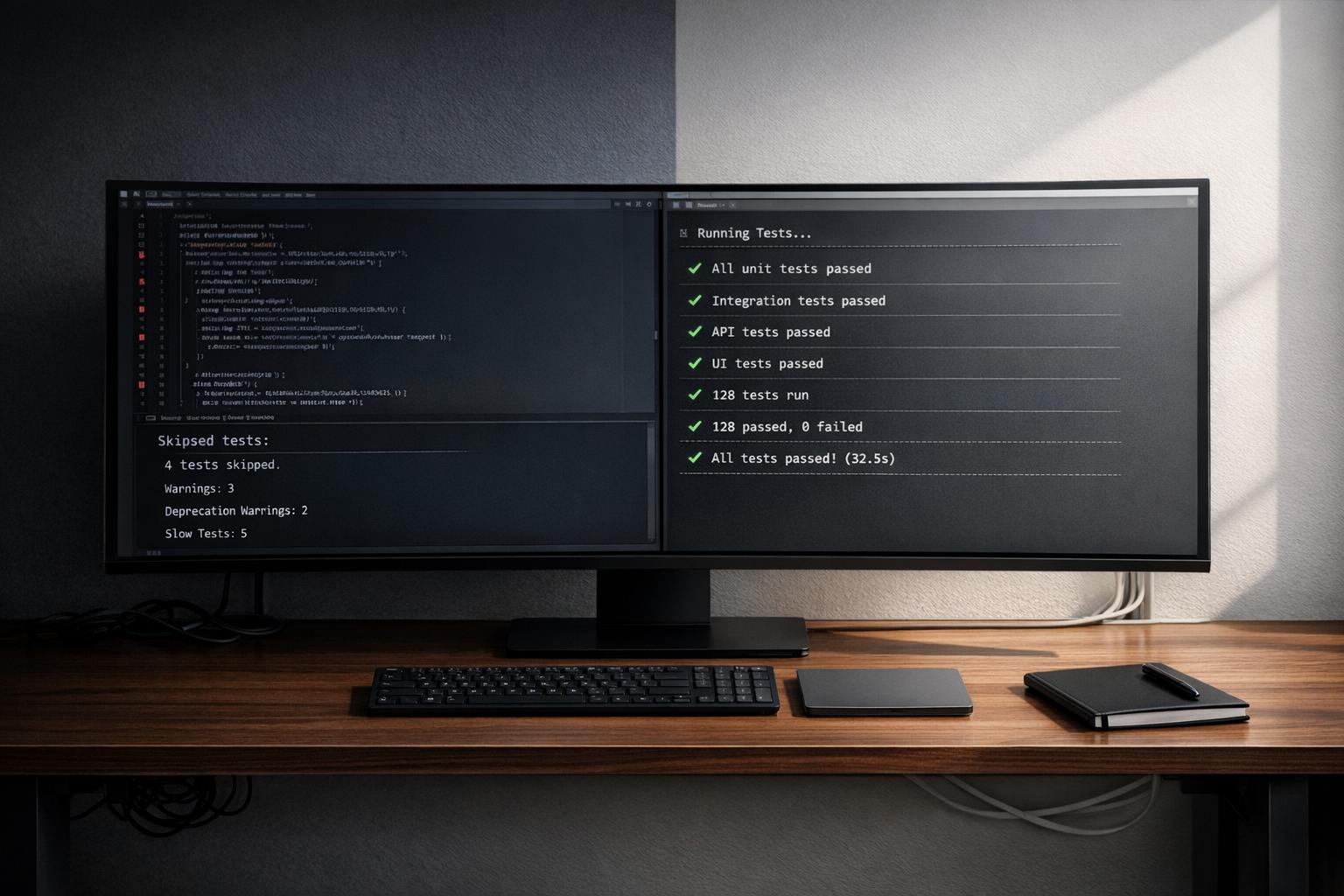

Build Modular Architecture with Automated Testing

To tackle issues like tightly coupled components and missing tests, start with a strong architectural plan. Develop a structured spec document that outlines data models, workflows, and permissions. This document serves as your single source of truth, ensuring consistency as your project evolves[23].

Follow the Single Responsibility Principle: every function or class should have one clear purpose[27]. Separate business logic into dedicated services, handle data persistence through repositories, and keep controllers focused and efficient[30]. Define clear interfaces between modules to minimize dependencies, reduce cascading failures, and allow for independent scaling[30].
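The service and repository split described above might look like the following in practice. This is a minimal sketch with hypothetical names (PlanSubscription, SubscriptionRepository, SubscriptionService), and the methods are synchronous for brevity where a real data layer would be asynchronous.

```typescript
interface PlanSubscription {
  id: string;
  userId: string;
  active: boolean;
}

// Persistence boundary: the service depends on this interface,
// not on a particular database client.
interface SubscriptionRepository {
  findByUserId(userId: string): PlanSubscription | undefined;
  save(subscription: PlanSubscription): void;
}

// Business logic only: no SQL, HTTP, or filesystem calls in here.
class SubscriptionService {
  private readonly repository: SubscriptionRepository;

  constructor(repository: SubscriptionRepository) {
    this.repository = repository;
  }

  // Returns true if an active subscription was cancelled.
  cancel(userId: string): boolean {
    const subscription = this.repository.findByUserId(userId);
    if (subscription === undefined || !subscription.active) {
      return false;
    }
    this.repository.save({ ...subscription, active: false });
    return true;
  }
}

// In-memory implementation, handy for unit tests; a database-backed class
// would implement the same interface.
class InMemorySubscriptionRepository implements SubscriptionRepository {
  private readonly byUserId = new Map<string, PlanSubscription>();

  findByUserId(userId: string): PlanSubscription | undefined {
    return this.byUserId.get(userId);
  }

  save(subscription: PlanSubscription): void {
    this.byUserId.set(subscription.userId, subscription);
  }
}

const repository = new InMemorySubscriptionRepository();
repository.save({ id: "sub-1", userId: "user-1", active: true });
const service = new SubscriptionService(repository);
console.log(service.cancel("user-1")); // true
console.log(service.cancel("user-1")); // false: already cancelled
```

Because the service sees only the interface, swapping the storage backend or testing the cancellation rule does not require touching the business logic.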

Testing is critical for maintaining quality. Use a multi-layered testing approach:

- Unit tests to verify individual components.

- Integration tests to ensure modules work together seamlessly, even under failure scenarios like database or API issues.

- End-to-end (E2E) tests with tools like Playwright to validate complete user workflows and edge cases[28][27].
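To show what testing beyond the happy path can look like, here is a small framework-free sketch. The checkout function, the PaymentGateway interface, and the expectEqual and expectThrows helpers are all hypothetical; in a real project the same cases would live in whichever test runner the team already uses.

```typescript
type OrderState = "paid" | "payment_failed";

interface PaymentGateway {
  charge(amountCents: number): void; // throws on failure
}

function checkout(amountCents: number, gateway: PaymentGateway): OrderState {
  if (!Number.isInteger(amountCents) || amountCents <= 0) {
    throw new RangeError("amountCents must be a positive integer");
  }
  try {
    gateway.charge(amountCents);
    return "paid";
  } catch {
    // A real handler would also log the failure; the point here is that the
    // order must not be marked as paid.
    return "payment_failed";
  }
}

// Tiny assertion helpers standing in for a test framework.
function expectEqual<T>(actual: T, expected: T, label: string): void {
  if (actual !== expected) {
    throw new Error(`${label}: expected ${String(expected)}, got ${String(actual)}`);
  }
}

function expectThrows(action: () => void, label: string): void {
  let threw = false;
  try {
    action();
  } catch {
    threw = true;
  }
  if (!threw) throw new Error(`${label}: expected an error`);
}

// Fakes for the dependency: one that works, one that simulates an outage.
const workingGateway: PaymentGateway = { charge: () => {} };
const timingOutGateway: PaymentGateway = {
  charge: () => {
    throw new Error("gateway timeout");
  },
};

expectEqual(checkout(1999, workingGateway), "paid", "happy path");
expectEqual(checkout(1999, timingOutGateway), "payment_failed", "gateway timeout");
expectThrows(() => checkout(-1, workingGateway), "negative amount");
expectThrows(() => checkout(0, workingGateway), "zero amount");
console.log("all checks passed");
```

Only the first check covers the happy path; the other three exercise the failure and malicious-input cases that generated code tends to skip.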

A recent study found that 72.8% of testers prioritize AI-powered testing for 2026, highlighting its growing importance[28]. Address common AI-generated inefficiencies, such as N+1 query problems, by enforcing eager loading and designing well-optimized database schemas with proper indexing[30][7]. Finally, treat AI-generated code as you would a junior developer’s draft – review it thoroughly before merging[31][7].
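The N+1 pattern is easiest to see by counting round trips. The sketch below uses a fake, hypothetical CountingDatabase in place of a real client and compares a per-row lookup with a single batched lookup. The batched version is a hand-written equivalent of what an ORM's eager loading does for you.

```typescript
interface PostRow {
  id: number;
  authorId: number;
}

interface AuthorRow {
  id: number;
  name: string;
}

// Fake data-access layer that counts round trips, standing in for a real client.
class CountingDatabase {
  queryCount = 0;
  private readonly authors: AuthorRow[] = [
    { id: 1, name: "Ada" },
    { id: 2, name: "Lin" },
  ];

  findAuthorById(id: number): AuthorRow | undefined {
    this.queryCount += 1;
    return this.authors.filter((author) => author.id === id)[0];
  }

  findAuthorsByIds(ids: number[]): AuthorRow[] {
    this.queryCount += 1;
    return this.authors.filter((author) => ids.indexOf(author.id) !== -1);
  }
}

// N+1 shape: one author lookup per post.
function authorNamesNaive(db: CountingDatabase, posts: PostRow[]): string[] {
  return posts.map((post) => {
    const author = db.findAuthorById(post.authorId);
    return author ? author.name : "unknown";
  });
}

// Batched shape: one lookup for all distinct author ids, then an in-memory join.
function authorNamesBatched(db: CountingDatabase, posts: PostRow[]): string[] {
  const distinctIds = posts
    .map((post) => post.authorId)
    .filter((id, index, all) => all.indexOf(id) === index);
  const nameById = new Map<number, string>();
  db.findAuthorsByIds(distinctIds).forEach((author) => {
    nameById.set(author.id, author.name);
  });
  return posts.map((post) => nameById.get(post.authorId) ?? "unknown");
}

const posts: PostRow[] = [
  { id: 10, authorId: 1 },
  { id: 11, authorId: 2 },
  { id: 12, authorId: 1 },
];

const naiveDb = new CountingDatabase();
authorNamesNaive(naiveDb, posts);
const batchedDb = new CountingDatabase();
authorNamesBatched(batchedDb, posts);
console.log(naiveDb.queryCount, batchedDb.queryCount); // 3 1
```

With three posts the difference is trivial; with thousands of rows per page load, the per-row version is the kind of query pattern that surfaces only under production traffic.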

With a modular architecture and strong testing practices in place, your project will be better equipped to handle growth and complexity.

Conclusion: Build Systems That Can Scale

What Founders Should Remember

The audits revealed a recurring theme for founders: the same three issues – inconsistent code, missing error handling, and weak architecture without testing – surfaced across all five cases. These flaws might seem manageable at first, but they can snowball quickly. An app that runs fine for 10 users might buckle under the load of 10,000 users or drain resources unnecessarily when scaling[2].

The longer you wait to address these problems, the more expensive they become. Fixing security or architectural flaws after launch can cost 5–10 times more than tackling them beforehand[21]. Think of technical debt like credit card interest: it compounds over time, making cleanup a much bigger burden. Investors are increasingly focused on technical audits, and a shaky, poorly coded foundation can hurt your valuation or even jeopardize funding opportunities[2][4].

How AlterSquare Can Help

AlterSquare offers solutions designed to tackle these challenges head-on and set your startup up for sustainable growth.

The AI-Agent Assessment dives into your codebase to identify vulnerabilities, architectural weak points, and areas of technical debt before they lead to costly failures or security breaches[32][33]. From there, the Principal Council provides a Traffic Light Roadmap that organizes issues by urgency – highlighting what needs immediate attention and what can be addressed later[32].

For startups aiming to scale without sacrificing stability, AlterSquare’s Managed Refactoring service rebuilds your architecture, implements automated testing, and strengthens security – all without slowing down your progress[32][33]. You don’t have to choose between moving fast and building a solid foundation. With AlterSquare, you get both.

FAQs

How can I tell if my codebase is “vibe-coded” and risky?

To spot a "vibe-coded" codebase that might pose risks, keep an eye out for these red flags:

- Commits that lack substance, such as only initial setups or basic bug fixes.

- Dependencies that seem redundant, unfamiliar, or conflicting, added without any accompanying documentation.

- A README or documentation that’s incomplete, unclear, or poorly structured.

These types of projects often focus on speed rather than building a solid foundation. This can result in fragile systems, overlooked design processes, and an overdependence on AI tools without proper validation or cleanup.

What are the fastest security fixes to do before launch?

To strengthen security before launch, prioritize these essential fixes:

- Set up Row Level Security (RLS) to limit database access based on user roles or permissions.

- Use environment variables instead of hardcoded credentials to safeguard sensitive information.

- Implement server-side authorization checks for every API endpoint to prevent unauthorized access.

- Resolve common vulnerabilities, such as insecure settings and outdated software components.

These measures tackle frequent security gaps in apps and help protect your product effectively.

When should we refactor vs rebuild the app?

When dealing with a codebase, the choice between refactoring and rebuilding depends on the nature and severity of the issues. Refactor when the problems are manageable – things like minor security flaws, outdated or fragile dependencies, or inconsistencies that can be addressed gradually without impacting core functionality.

On the other hand, rebuild if the codebase is plagued by critical vulnerabilities, overwhelming technical debt, or structural issues that block scalability and stability. The decision should weigh the seriousness of the problems, the risk of accumulating further technical debt, and the long-term goals of the project.
