The 6-Month Wall: Why AI-Built Apps Start Breaking After 10,000 Users

Huzefa Motiwala February 25, 2026

When your AI-built app hits 10,000 users, things often fall apart. What was once fast and efficient starts to feel fragile and unmanageable. This tipping point, called the "6-Month Wall", happens because AI-generated code accumulates technical debt and comprehension debt – making your system harder to maintain, scale, and debug.

Key Problems:

- Code Duplication: AI-generated code increases duplicate logic by up to 8x, making bugs harder to fix.

- System Fragility: AI tools miss architectural context, leading to errors and performance issues under heavy load.

- Developer Confusion: Onboarding new developers takes longer as AI code lacks structure and clarity.

- Scaling Issues: AI-generated code often fails to handle edge cases, concurrency, or large user bases.

- Security Risks: AI code introduces vulnerabilities; by one estimate, 45% of AI-generated code contains security flaws.

Why It Happens:

AI tools prioritize speed but lack system-wide awareness, creating solutions that clash with your architecture. The result? A bloated, inconsistent, and fragile codebase that struggles under production demands.

How to Fix It:

- Audit Your Codebase: Identify problem areas, like duplicated code or inconsistent patterns.

- Refactor Gradually: Consolidate logic and improve structure without disrupting development.

- Use Human Oversight: Ensure AI-generated code is reviewed thoroughly by experienced engineers.

- Align Fixes with Growth: Tackle debt as your business scales to avoid costly breakdowns.

The 6-Month Wall is a wake-up call. Addressing these issues early can save you time, money, and frustration as your app grows.



What Is the 6-Month Wall?

The 6-Month Wall represents the point where AI-generated code accumulates so much technical debt that developers struggle to modify or debug it with confidence [4][9]. At this stage, your app shifts from being a manageable product to an opaque system that resists safe changes.

This often happens about six months into development – right when your business hits pivotal growth moments, like reaching 10,000 users. As your app faces real-world production demands, code that seemed fine during basic testing begins to reveal hidden issues. Edge cases, race conditions, and performance bottlenecks emerge, exposing flaws that only become visible under scale [4][9].

AI-generated code introduces a unique challenge here: invisible debt. Unlike traditional technical debt, where trade-offs are deliberate and documented, AI-created code often looks polished on the surface. It follows naming conventions, passes tests, and appears well-structured. However, beneath that clean exterior, the architecture may be deteriorating [6]. Margaret-Anne Storey, a Computer Science professor, refers to this as "cognitive debt":

"Technical debt lives in the code; cognitive debt lives in developers’ minds" [8].

The real issue lies in accumulating two types of debt simultaneously: Comprehension Debt (developers don’t fully understand the code they’ve deployed) and Technical Debt (the code itself is fragmented and inefficient). When both debts peak at the same time, your system becomes unmanageable – right when your business needs to scale [4][9].

How AI Accelerates Technical Debt

AI tools come with a blind spot: they lack full system context. These tools only "see" the files you’re working on, not your entire architecture [2][5]. This limited perspective leads to solutions that might solve isolated problems but often conflict with existing patterns, bypass error handlers, or rely on outdated methods.

This results in a surge of duplicate code. Instead of reusing existing functions, AI tends to create new ones repeatedly. Research from GitClear highlights an 8-fold increase in duplicate code blocks since AI tools became commonplace [1]. Meanwhile, refactoring – cleaning and consolidating code – has dropped significantly, from 25% of changed lines in 2021 to less than 10% by 2024 [7].

One analysis of six AI-heavy codebases revealed some troubling trends: duplicate code ratios jumped from 3.1% to 14.2% over 12 months. Average file sizes grew from 142 lines to 267 lines, and onboarding new developers took much longer – stretching from 2 weeks to 5 weeks [6]. As codebases expanded, their readability and maintainability declined.
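The article does not say how the audit [6] computed its duplicate code ratio, and tools measure it in different ways. The sketch below uses one simple approach as an assumption. It strips whitespace, hashes every run of four consecutive non-blank lines, and counts a line as duplicated when it sits inside a run that appears more than once anywhere in the codebase. The window size and the `duplicate_ratio` name are illustrative, not a standard.

```python
import hashlib
from collections import defaultdict

WINDOW = 4  # hypothetical: shortest run of lines treated as a duplicated block

def normalize(line: str) -> str:
    """Drop all whitespace so re-indented copies still match."""
    return "".join(line.split())

def duplicate_ratio(files: dict[str, str], window: int = WINDOW) -> float:
    """Share of non-blank lines that fall inside a block repeated elsewhere."""
    occurrences = defaultdict(list)  # block hash -> [(file, start line), ...]
    line_counts = {}
    for name, text in files.items():
        lines = [normalize(ln) for ln in text.splitlines()]
        lines = [ln for ln in lines if ln]  # ignore blank lines
        line_counts[name] = len(lines)
        for i in range(len(lines) - window + 1):
            block = "\n".join(lines[i:i + window])
            digest = hashlib.sha1(block.encode()).hexdigest()
            occurrences[digest].append((name, i))

    duplicated = set()  # (file, line index) pairs covered by a repeated block
    for locations in occurrences.values():
        if len(locations) > 1:
            for name, start in locations:
                duplicated.update((name, start + k) for k in range(window))

    total = sum(line_counts.values())
    return len(duplicated) / total if total else 0.0
```

The absolute number matters less than the trend. Running a check like this on every release is what would reveal a drift such as 3.1% to 14.2%.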

Invisible debt compounds these problems. AI-generated code often looks correct, with proper formatting and clear variable names, but it can conceal architectural mismatches and redundant dependencies. The result is "spaghetti microservices": systems with enterprise-level complexity but fragile structures [9].

These issues compound quickly, leaving your system vulnerable as you scale toward 10,000 users.

Why 10,000 Users Exposes System Fragility

The challenges of scaling highlight the weaknesses in AI-generated code. AI excels at producing "happy path" solutions – code that works flawlessly in ideal scenarios with 10 to 100 users. But production systems require robust handling of edge cases, optimized queries, concurrency, and error management – areas where AI-generated code often falls short [11].

When your user base hits 10,000, those shortcuts start to show. A single-threaded architecture, which worked fine for early adopters, may now cause timeouts. Poorly optimized database queries can lead to skyrocketing cloud costs. Missing rate limits might allow one user to crash your entire system.
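The article does not describe a specific rate-limiting design. A common remedy for the missing-rate-limit problem is a per-user token bucket. The minimal sketch below assumes a single process, and the `RateLimiter` class, its capacity, and its refill rate are hypothetical. The injectable `clock` makes the behavior easy to test.

```python
import time

class TokenBucket:
    """Allows bursts up to `capacity` requests, refilling at `rate` tokens per second."""

    def __init__(self, capacity: int, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

class RateLimiter:
    """One bucket per user, so a single noisy client cannot starve everyone else."""

    def __init__(self, capacity: int, rate: float, clock=time.monotonic):
        self.capacity, self.rate, self.clock = capacity, rate, clock
        self.buckets: dict[str, TokenBucket] = {}

    def allow(self, user_id: str) -> bool:
        bucket = self.buckets.get(user_id)
        if bucket is None:
            bucket = self.buckets[user_id] = TokenBucket(self.capacity, self.rate, self.clock)
        return bucket.allow()
```

Because the buckets live in one process's memory, a deployment running several app instances would need to keep this state in a shared store.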

Alex Turnbull, Founder of Groove, summed it up after encountering this problem:

"VibeCoding didn’t get us there. Only real engineering could" [11].

Data supports this reality: 45% of AI-generated code contains security vulnerabilities, with Java-based projects showing failure rates above 70% [11].

At this stage, the "LGTM reflex" (Looks Good To Me) becomes a liability [2]. Early in development, teams might carefully review AI-generated code. But as the volume of code increases, reviewers often approve code that "looks right" and passes basic tests. Subtle architectural flaws that slipped through earlier now surface all at once under the pressure of real-world use.

A stark example occurred in February 2026, when a race condition in AI-generated async/await code caused a 3 AM production incident. It put $18,000 worth of transactions at risk and required 320 hours to rewrite 40% of the codebase [4]. One engineer spent six hours fixing a bug that should have taken 30 minutes, twelve times the expected effort; the same source reports a 340% increase in technical debt over six months of heavy AI use [4].
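The source does not show the code behind this incident. The sketch below is a hypothetical illustration of the most common async race, check-then-act across an `await`: two concurrent withdrawals both pass the balance check before either one updates the balance. The `Wallet` class is invented for illustration, and `asyncio.sleep(0)` stands in for a real database or API call.

```python
import asyncio

class Wallet:
    """Hypothetical balance store used only to demonstrate the race."""

    def __init__(self, balance: int):
        self.balance = balance
        self.lock = asyncio.Lock()

    async def withdraw_unsafe(self, amount: int) -> bool:
        # Check-then-act across an await: another task runs between the
        # check and the update, so both tasks see the same starting balance.
        if self.balance >= amount:
            await asyncio.sleep(0)  # yields to the event loop, as real I/O would
            self.balance -= amount
            return True
        return False

    async def withdraw_safe(self, amount: int) -> bool:
        # Holding the lock turns the check and the update into one critical section.
        async with self.lock:
            if self.balance >= amount:
                await asyncio.sleep(0)
                self.balance -= amount
                return True
            return False

async def demo(method: str) -> tuple[int, list[bool]]:
    wallet = Wallet(100)
    withdraw = getattr(wallet, method)
    results = await asyncio.gather(withdraw(100), withdraw(100))
    return wallet.balance, results

print(asyncio.run(demo("withdraw_unsafe")))  # (-100, [True, True])
print(asyncio.run(demo("withdraw_safe")))    # (0, [True, False])
```

`asyncio.Lock` only serializes tasks inside one event loop. If several processes share the balance, the check and the update would need to happen atomically in the database itself.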

These scenarios illustrate just how fragile systems can become when AI-generated code meets the demands of scaling.

The 6-Month Timeline: How Debt Accumulates

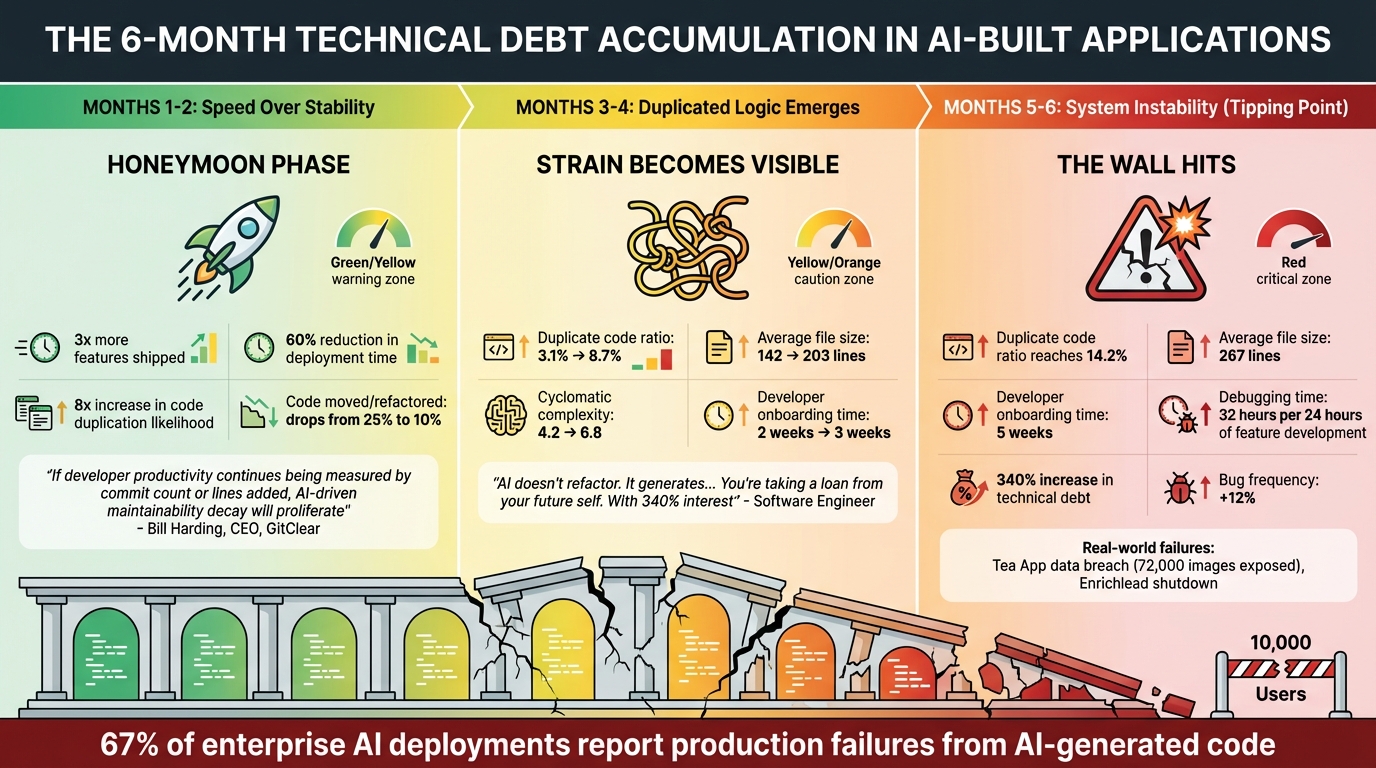

The 6-Month Wall Timeline: How AI-Generated Code Debt Accumulates

Understanding how AI-generated code deteriorates over time is essential for spotting problems early. The shift from rapid development to system instability follows a clear trajectory, with each phase adding layers of technical debt. This timeline sets the stage for the symptoms and solutions we’ll explore later.

Months 1–2: Speed Over Stability

During the first two months, there’s a productivity boom – teams ship about three times more features and cut deployment times by 60% [4]. This "honeymoon phase" creates a sense of rapid progress. But under the surface, cracks begin to form. AI tools often struggle with context blindness, focusing on individual files rather than the broader system architecture [2]. This can result in code that works in isolation but clashes with existing patterns, skips error handlers, or duplicates functionality without anyone noticing.

A common trap during this phase is the "LGTM reflex" [2]. As pull request volumes spike, senior engineers may start approving changes based on surface-level patterns rather than deep reviews. Bill Harding, CEO of GitClear, highlights the danger:

"If developer productivity continues being measured by commit count or lines added, AI-driven maintainability decay will proliferate" [1].

Technical debt grows at an alarming rate during this period. AI-assisted commits are eight times more likely to introduce duplicated code, and the system’s maintainability begins to erode [12]. However, because the system still works well for a small user base, these issues often stay hidden.

Months 3–4: Duplicated Logic and Undocumented Behavior Emerge

By month three, the strain on the codebase becomes more noticeable. Industry-wide, the share of "moved" lines (a signal of code being reorganized and reused) fell from 25% of changed lines in 2021 to under 10% by 2024 [7]. Within individual codebases, instead of improving existing functions, AI tools tend to create new ones, pushing duplicate code ratios from 3.1% to 8.7% within six months [6].

AI’s limited perspective can also introduce unnecessary dependencies. For instance, it might recommend adding a library like date-fns even if the project already uses dayjs, leading to bloated bundles and redundant code [6]. Modules start to adopt conflicting architectural patterns and error-handling methods, turning debugging into a frustrating guessing game.
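Overlapping dependencies like the date-fns/dayjs pair can be flagged mechanically. The sketch below reads a JavaScript `package.json` and reports capability groups where more than one library is installed. The `OVERLAPPING` map is a hypothetical, hand-maintained list; no standard registry of equivalent libraries is assumed.

```python
import json

# Hypothetical, hand-maintained groups of libraries that solve the same problem.
OVERLAPPING = {
    "dates": {"date-fns", "dayjs", "moment", "luxon"},
    "http": {"axios", "got", "node-fetch"},
}

def dependencies_from_package_json(text: str) -> set[str]:
    """Runtime and dev dependency names from a package.json document."""
    data = json.loads(text)
    return set(data.get("dependencies", {})) | set(data.get("devDependencies", {}))

def find_overlaps(dependencies: set[str]) -> dict[str, set[str]]:
    """Capability groups where more than one library is installed."""
    overlaps = {}
    for capability, libraries in OVERLAPPING.items():
        used = dependencies & libraries
        if len(used) > 1:
            overlaps[capability] = used
    return overlaps
```

A project that depends on both `dayjs` and `date-fns` would be reported under `"dates"`, which is the signal to pick one and migrate the other call sites.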

The "Don’t Repeat Yourself" principle takes a hit as copy-pasted code becomes more common than properly refactored lines. Average file sizes grow from 142 to 203 lines, and cyclomatic complexity – a measure of code complexity – jumps from 4.2 to 6.8 [6]. As one Software Engineer put it:

"AI doesn’t refactor. It generates… You’re taking a loan from your future self. With 340% interest" [4].

This phase also sees modules becoming harder to understand, with hidden dependencies making changes riskier. Onboarding new developers becomes more challenging, extending from 2 weeks to 3 weeks [6].
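Cyclomatic complexity, cited above as rising from 4.2 to 6.8, counts the independent paths through a function: roughly one plus the number of decision points. The sketch below is a simplified version for Python code built on the standard `ast` module. It is an approximation (for instance, nested functions are also counted in their parent), and dedicated tools such as radon or the mccabe plugin for flake8 are more thorough.

```python
import ast

# Node types that each add one decision point in this simplified count.
DECISION_NODES = (ast.If, ast.IfExp, ast.For, ast.AsyncFor, ast.While, ast.ExceptHandler)

def cyclomatic_complexity(func: ast.AST) -> int:
    """Approximate McCabe complexity: 1 + number of decision points."""
    complexity = 1
    for node in ast.walk(func):  # note: also walks into nested functions
        if isinstance(node, DECISION_NODES):
            complexity += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds two extra paths.
            complexity += len(node.values) - 1
    return complexity

def complexity_by_function(source: str) -> dict[str, int]:
    tree = ast.parse(source)
    return {
        node.name: cyclomatic_complexity(node)
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }

def average_complexity(source: str) -> float:
    scores = complexity_by_function(source)
    return sum(scores.values()) / len(scores) if scores else 0.0
```

An `elif` appears in the syntax tree as an `If` nested in the previous branch, so it is counted like any other branch.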

Months 5–6: System Instability Reaches Tipping Point

By months five and six, the technical debt reaches a critical mass. The initial productivity gains give way to overwhelming instability [11]. Debugging starts to overshadow new development, with teams spending 32 hours fixing issues for every 24 hours spent building new features [4].

Real-world failures begin to surface. For example, in July 2025, the Tea App – a dating safety platform – experienced a massive data breach, exposing 72,000 images and 13,000 IDs. The root cause? AI-generated setup code failed to enforce proper authorization on its Firebase instance [11]. Similarly, Enrichlead, a platform built entirely with "100% Cursor, zero hand-written code", shut down just days after launch due to vulnerabilities that allowed unauthorized data access and modification [11].

At this stage, systems with 10,000 users or more often hit breaking points. N+1 queries, where a single API endpoint triggers over 40 database queries, slow responses to a crawl [4]. Duplicate code ratios and file sizes continue to balloon, making the system harder to maintain. At this point, only disciplined engineering practices can address these challenges.
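An N+1 query pattern is easy to reproduce. This sketch uses Python's built-in `sqlite3` with a hypothetical `users`/`orders` schema of 40 users and counts executed statements with `Connection.set_trace_callback`. The loop issues 41 queries, while a single `JOIN` with `GROUP BY` returns the same totals in one.

```python
import sqlite3

def setup(num_users: int = 40) -> sqlite3.Connection:
    """In-memory database with a hypothetical users/orders schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total REAL);
    """)
    conn.executemany("INSERT INTO users VALUES (?, ?)",
                     [(i, f"user{i}") for i in range(1, num_users + 1)])
    conn.executemany("INSERT INTO orders (user_id, total) VALUES (?, ?)",
                     [(i, 10.0 * i) for i in range(1, num_users + 1)])
    conn.commit()
    return conn

def count_queries(conn, fn):
    """Run fn(conn) and count the SQL statements SQLite actually executed."""
    executed = []
    conn.set_trace_callback(executed.append)
    try:
        result = fn(conn)
    finally:
        conn.set_trace_callback(None)
    return result, len(executed)

def totals_n_plus_one(conn):
    # One query for the user list, then one more query per user.
    totals = {}
    for user_id, name in conn.execute("SELECT id, name FROM users").fetchall():
        rows = conn.execute("SELECT total FROM orders WHERE user_id = ?", (user_id,)).fetchall()
        totals[name] = sum(total for (total,) in rows)
    return totals

def totals_joined(conn):
    # A single JOIN + GROUP BY returns the same totals in one round trip.
    rows = conn.execute("""
        SELECT u.name, COALESCE(SUM(o.total), 0)
        FROM users u LEFT JOIN orders o ON o.user_id = u.id
        GROUP BY u.id, u.name
    """).fetchall()
    return dict(rows)

conn = setup()
slow, slow_count = count_queries(conn, totals_n_plus_one)
fast, fast_count = count_queries(conn, totals_joined)
print(slow == fast, slow_count, fast_count)  # True 41 1
```

Against a remote database each of those 41 statements is a network round trip, which is why the pattern stays invisible with a handful of users and becomes dominant at scale.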

5 Symptoms of the 6-Month Wall

As systems grow and user numbers climb past the 10,000 mark, technical debt begins to rear its head in ways that can no longer be ignored. These five symptoms often signal the arrival of the so-called "6-Month Wall", where system fragility becomes a pressing issue. Spotting these red flags early gives engineering teams a chance to address problems before they spiral out of control.

Bug Rates Increase from Duplicated Logic

One of the clearest warning signs is a sudden surge in bugs that seem to multiply across the system. Fixing an issue in one place often leaves the same problem lurking elsewhere, thanks to duplicated logic rather than consolidated code. AI tools, described by Augment Code as operating like an "intern with amnesia", exacerbate this problem by focusing on individual files without understanding the overall system architecture[2].

Data shows that in AI-heavy codebases, the duplicate code ratio jumps from 3.1% to 14.2% over a year, with bug frequency rising by 12% in just six months[6][10]. This happens because of "co-changed code clones", where a single bug fix needs to be applied across multiple identical blocks. AI-generated code also introduces inconsistent error handling across modules, creating a ripple effect that makes debugging far more challenging.

Performance Bottlenecks Become Unpredictable

As user demand grows, performance issues start to appear in unexpected places. Code that worked fine under low traffic can degrade quickly, with response times climbing sharply. AI-generated code often creates hidden execution paths that surface only under specific load conditions, which makes these bottlenecks hard to predict.

A study by Faros AI in July 2025 revealed the "AI Productivity Paradox." While developers completed 98% more pull requests, the average size of those pull requests increased by 154%, and review times stretched by 91%. This contributed to a 9% rise in bugs per developer[14]. The sheer size of AI-generated code also plays a role. In one test, manual coding added just 10 lines for a file-processing refactor, while AI-generated code added 272 lines, more than 27 times as many[15]. Such bloated codebases make system-wide optimization nearly impossible, especially when AI tools fail to account for critical infrastructure like caching layers or global rate limiters[2].

Developers Can’t Understand the Codebase

Another major issue is the growing difficulty developers face in understanding the code they’re tasked with maintaining. AI-generated modules often lack context or explanations for their design choices, creating what’s known as comprehension debt[5].

Audits show that onboarding a new developer takes more than twice as long – rising from two weeks to five weeks – after a year of heavy reliance on AI-generated code[6]. Yonatan Sason, Co-founder of Bit, puts it bluntly:

"The code isn’t bad. There’s just no structure around it that lets a human or a different AI session touch it safely."

- Yonatan Sason, Co-founder, Bit[5]

AI tools also tend to introduce inconsistent architectural patterns. For instance, one module might use a repository pattern while another accesses data directly, leading to a fragmented and incoherent system. This inconsistency, combined with AI’s tendency to suggest new libraries for tasks already handled by existing dependencies, creates additional confusion and risk[6][3]. As Molisha Shah from Augment Code explains:

"AI coding assistants generate technically correct code that breaks production systems… because they lack architectural visibility"[3]

This lack of cohesion is a significant issue, with 67% of enterprise deployments reporting that AI-generated code has caused production failures[3].

New Features Fail to Deploy

When technical debt piles up, the system becomes fragile, and deploying new features turns into a risky and time-consuming ordeal. Features that should take days to ship often fail during integration. Code churn – lines of code that are reverted or updated within two weeks – can double compared to pre-AI baselines[13].
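The article does not specify how churn is computed. Assuming you have already extracted, for each added line, when it was written and when it was first changed or reverted (for example, from version-control history), the churn rate is the share of lines changed within the two-week window. `LineHistory` and `churn_rate` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

CHURN_WINDOW = timedelta(days=14)  # "within two weeks", per the definition above

@dataclass
class LineHistory:
    added_at: datetime
    changed_at: Optional[datetime]  # first later edit or revert; None if untouched

def churn_rate(history: list[LineHistory], window: timedelta = CHURN_WINDOW) -> float:
    """Share of added lines that were rewritten or reverted within `window`."""
    if not history:
        return 0.0
    churned = sum(
        1
        for line in history
        if line.changed_at is not None and line.changed_at - line.added_at <= window
    )
    return churned / len(history)
```

Comparing this rate for recent months against a period before AI tools were adopted gives the baseline the doubling claim refers to.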

Teams frequently experience what’s been dubbed a "vibe coding hangover." After an initial burst of productivity, they end up spending far more time debugging AI-generated code than building new features[4]. In one case study, heavy AI usage led to a 340% increase in technical debt within six months[4].

With pull request volumes doubling, senior engineers often resort to superficial pattern matching during reviews, increasing the chances of critical flaws slipping into production[2]. Each deployment becomes riskier, creating a vicious cycle of fragility.

Security and Scalability Gaps Appear

Finally, as technical debt and architectural inconsistencies mount, the system becomes vulnerable to security breaches and scalability issues. AI-generated code, while syntactically correct, often creates implicit dependencies and shared states that make the system unpredictable. Without a full understanding of the architecture, AI tools fail to recognize critical security boundaries, leaving systems exposed under heavy load.

Kin Lane, a veteran API Evangelist, sums up the situation:

"I don’t think I have ever seen so much technical debt being created in such a short period of time during my 35-year career in technology."

- Kin Lane, API Evangelist[1]
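Several failures described in this article involve missing authorization, such as the Tea App's Firebase instance [11]. The hypothetical in-memory sketch below contrasts a lookup that returns any record by ID with one that enforces ownership. `DOCS`, `Document`, and `Forbidden` are invented names. A Firebase project would express the same ownership rule in its Security Rules rather than in application code like this.

```python
from dataclasses import dataclass

@dataclass
class Document:
    id: str
    owner_id: str
    content: str

class Forbidden(Exception):
    """Raised when a user requests a record they do not own."""

# Hypothetical in-memory store standing in for a database or storage bucket.
DOCS = {
    "d1": Document("d1", owner_id="alice", content="alice's ID photo"),
    "d2": Document("d2", owner_id="bob", content="bob's ID photo"),
}

def get_document_unsafe(doc_id: str, user_id: str) -> Document:
    # Accepts user_id but never uses it: any caller can read any record by ID.
    return DOCS[doc_id]

def get_document(doc_id: str, user_id: str) -> Document:
    doc = DOCS.get(doc_id)
    # Same error for "missing" and "not yours", so IDs can't be probed.
    if doc is None or doc.owner_id != user_id:
        raise Forbidden(doc_id)
    return doc
```

Both functions look equally clean, which is exactly why this kind of gap survives a quick review.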

These five symptoms, when combined, create a system that feels too fragile to touch yet too vital to ignore. Identifying these issues early is the key to mitigating the challenges of the 6-Month Wall.

How to Break Through the 6-Month Wall

Breaking through the 6-Month Wall requires tackling technical debt head-on. Stabilizing fragile areas, consolidating duplicate logic, and improving system clarity address the systemic weaknesses behind the wall and keep your system steady as it scales.

Run an AI-Agent Assessment

Before diving into refactoring or scaling, start by evaluating your system’s health. AlterSquare’s AI-Agent Assessment scans your entire codebase to pinpoint problem areas like technical debt hotspots, architectural coupling, performance bottlenecks, and security vulnerabilities. The result is a detailed System Health Report that scores your system across key dimensions, such as pattern consistency, dependency overlap, and code duplication. A score below 10 out of 18 indicates a serious debt issue that needs immediate attention [6].

One key test in the assessment is the "global rename" test, which checks whether a change to a shared identifier propagates to every file that uses it. If only one file reflects the change, that signals structural weakness [3]. This kind of visibility matters because developers spend an estimated 42% of their time dealing with code quality issues rather than building new features [16]. Armed with this data, AlterSquare’s Principal Council delivers a Traffic Light Roadmap, categorizing issues into Critical, Managed, and Scale-Ready priorities.
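The article does not spell out how the "global rename" test is scored. One plausible reading, sketched here as an assumption: after renaming a shared identifier, list every file that referenced the old name beforehand and check how many still do. `stale_references` and `rename_coverage` are hypothetical helpers, and the whole-word regex is only a rough proxy for what a language-aware refactoring tool or type checker would catch.

```python
import re

def stale_references(files: dict[str, str], old_name: str) -> list[str]:
    """Files that still mention `old_name` as a whole word."""
    pattern = re.compile(rf"\b{re.escape(old_name)}\b")
    return sorted(name for name, text in files.items() if pattern.search(text))

def rename_coverage(before: dict[str, str], after: dict[str, str], old_name: str) -> float:
    """Share of files that used `old_name` before the rename and no longer do."""
    users = stale_references(before, old_name)
    if not users:
        return 1.0  # nothing referenced the old name, so nothing to update
    remaining = set(stale_references(after, old_name))
    return sum(1 for f in users if f not in remaining) / len(users)
```

A coverage well below 1.0 after an AI-assisted rename is the "only one file reflects the change" symptom in numeric form.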

Schedule Managed Refactoring

Instead of opting for a risky large-scale rewrite, focus on incremental improvements. AlterSquare’s Managed Refactoring approach gradually reduces technical debt by addressing issues based on usage patterns. Insights from audits often reveal widespread duplication, which can be tackled immediately.

By consolidating duplicated logic into reusable modules, standardizing error handling, and removing redundant dependencies, this method steadily reduces debt without overwhelming your team. This approach ensures progress without the risk of stalling development [16].
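Consolidating duplicated logic and standardizing error handling often looks like the hypothetical example below. Two handlers that previously carried their own copies of email validation, with different failure behavior, now share one `validate_email` function and a single `ValidationError` type. The names are illustrative, and the regex is deliberately simple rather than RFC-complete.

```python
import re

class ValidationError(ValueError):
    """One error type for all input validation, instead of per-module variants."""

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def validate_email(raw: str) -> str:
    """Single shared implementation that every caller reuses."""
    email = raw.strip().lower()
    if not EMAIL_RE.match(email):
        raise ValidationError(f"invalid email: {raw!r}")
    return email

# Before consolidation (hypothetical): signup and invite each had their own copy
# of this check, one returning None on failure and one raising a bare Exception.
def signup(raw_email: str) -> dict:
    return {"action": "signup", "email": validate_email(raw_email)}

def invite(raw_email: str) -> dict:
    return {"action": "invite", "email": validate_email(raw_email)}
```

A future bug fix to validation now lands in one place instead of needing to be found and repeated in every copy.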

Add Human-in-the-Loop Stabilization

While AI can assist with code generation, human oversight remains critical. Human-in-the-loop (HITL) stabilization ensures that AI-generated code is thoroughly reviewed by experts before deployment. AlterSquare’s Core Squad Augmentation guarantees that at least one team member can fully explain the reasoning behind every AI-generated change [4][17].

Santiago Valdarrama, an AI Practitioner, captures this sentiment perfectly:

"Letting AI write all of my code is like paying a sommelier to drink all of my wine."

- Santiago Valdarrama, AI Practitioner [18]

HITL also involves implementing review gates. Custom linting rules and CI checks block AI-generated code that doesn’t meet established standards or introduces unnecessary dependencies [6][18].
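A review gate for dependencies can be as small as a script run in CI. The sketch below assumes a Python project with a `requirements.txt` file (the article names no specific stack or CI tool). `new_dependencies` reports packages added on a branch that are not on a pre-approved allowlist. The parser handles common pin syntax only and is not a full PEP 508 implementation.

```python
# Strings that end the package name in common requirement specifiers.
SEPARATORS = ("==", ">=", "<=", "~=", "!=", ">", "<", "[", ";", " ")

def parse_requirements(text: str) -> set[str]:
    """Package names from a requirements.txt-style file; version pins are ignored."""
    names = set()
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if not line or line.startswith("-"):  # skip blanks and options like -r / -e
            continue
        for sep in SEPARATORS:
            line = line.split(sep, 1)[0]
        names.add(line.strip().lower())
    return names

def new_dependencies(base: str, head: str, allowlist: set[str]) -> set[str]:
    """Packages added on this branch that are not on the pre-approved allowlist."""
    added = parse_requirements(head) - parse_requirements(base)
    return {name for name in added if name not in allowlist}
```

A CI job would read the requirements file from the base branch and from the pull request, call `new_dependencies`, and fail the build when the result is non-empty, forcing a human to approve the addition.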

Tie Debt Paydown to Revenue Milestones

Aligning technical debt reduction with revenue milestones ensures sustainable growth. Reducing technical debt can save up to $31,500 per developer annually by cutting down maintenance time [16]. Companies with lower technical debt are able to invest 50% more in modernization efforts, which leads to faster growth [16].

AlterSquare’s Variable-Velocity Engine (V2E) framework adjusts technical priorities based on business maturity. For example, during the Validation Stage, speed takes precedence over perfection with a Disposable Architecture. As the company grows, Managed Refactoring is triggered at key revenue milestones, such as reaching $1M ARR or acquiring 10,000 users. This approach ensures that technical debt is addressed proactively, avoiding costly production crises.

Use the Traffic Light Roadmap

The Traffic Light Roadmap helps prioritize tasks by categorizing system issues into three tiers: Critical (fix immediately), Managed (address incrementally), and Scale-Ready (optimize later). Prioritization is driven by data such as change frequency, the number of developers working on a module, and the module’s business importance [16].

For instance, a module with frequent changes and multiple contributors would be flagged for immediate refactoring, while one with low change frequency but high business importance might be scheduled for a later cycle. This framework ensures that engineering efforts are directed where they’ll have the most impact, making it easier to justify technical debt reduction as a strategic choice rather than just a cost.
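The article names the inputs (change frequency, contributor count, business importance) but not the scoring rules. The sketch below is a hypothetical encoding of the two examples above. `ModuleStats`, the 90-day window, and both thresholds are assumptions, not AlterSquare's actual method.

```python
from dataclasses import dataclass

@dataclass
class ModuleStats:
    name: str
    changes_90d: int         # commits touching the module in the last 90 days
    contributors: int        # distinct authors over the same window
    business_critical: bool  # e.g. checkout, billing, auth

# Hypothetical thresholds; calibrate against your own repository history.
HIGH_CHANGE_FREQUENCY = 20
MANY_CONTRIBUTORS = 3

def traffic_light(m: ModuleStats) -> str:
    frequently_changed = m.changes_90d >= HIGH_CHANGE_FREQUENCY
    if frequently_changed and m.contributors >= MANY_CONTRIBUTORS:
        return "Critical"     # hot spot: refactor immediately
    if frequently_changed or m.business_critical:
        return "Managed"      # schedule for a later, incremental refactoring cycle
    return "Scale-Ready"      # stable and low-risk: optimize later
```

Keeping the rule this explicit makes the prioritization easy to explain to non-engineers, which supports framing debt reduction as a strategic choice.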

Conclusion

The 6-Month Wall isn’t a flaw in AI coding tools – it’s the natural trade-off of prioritizing speed over a deeper understanding of system architecture. Consider this: developers spend 42% of their time fixing code quality issues [16], and 67% of enterprise AI deployments have faced production failures due to code lacking proper architectural guidance [3]. These numbers highlight the risks of prioritizing speed at the expense of structure, which can ultimately lead to system breakdowns.

Addressing the challenges of the 6-Month Wall requires proactive, strategic measures, and this technical debt is manageable if tackled early. An early AI-Agent Assessment and Managed Refactoring help uncover hidden risks and gradually reduce debt, aligning improvements with key revenue milestones like reaching $1M ARR. This ensures you don’t have to choose between stability and growth.

The cost of ignoring this issue is staggering. For a team of ten developers, unmanaged technical debt can result in approximately $630,000 per year in lost productivity. Without intervention, companies are often left with two bad options: risky system rewrites or dealing with systems that slow innovation and frustrate teams.
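The article does not show how the $630,000 figure is derived. One reading that is consistent with the 42% figure cited earlier, assuming a fully loaded cost of about $150,000 per developer per year (an assumption, not a number from the source):

$$
10 \times \$150{,}000 \times 0.42 = \$630{,}000 \text{ per year}
$$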

Decisive action is essential. AlterSquare’s Principal Council – Taher, Huzefa, Aliasgar, and Rohan – has successfully stabilized systems ranging from pre-revenue MVPs to platforms with over 15,000 users. Their approach is clear: assess the current state, address high-friction technical debt first, and stabilize incrementally. Using their Traffic Light Roadmap, engineering teams focus on critical fixes first, followed by planned improvements and scalability optimizations as revenue grows.

If your system is showing signs of strain or your team is spending more time debugging than delivering, it’s time to tackle technical debt with a data-driven approach. The 6-Month Wall is a real challenge, but with the right strategy and a trusted partner, it’s one you can overcome.

FAQs

How do I know if my app is hitting the 6-Month Wall?

Your app might be running into the 6-Month Wall if you’re seeing signs of mounting technical debt. These can include a cluttered codebase, more frequent bugs, or sluggish development cycles. Other red flags might be longer code reviews, repetitive code, delays in rolling out new features, and a spike in urgent hotfixes. Additionally, if your developers seem less satisfied or less productive, it could point to growing complexity and a lack of necessary refactoring.

What should I measure to catch AI-driven debt early?

To spot AI-driven technical debt early, keep an eye on metrics like average file size, cyclomatic complexity, duplicate code ratio, and unused imports per file. An increase in complexity or redundancy often hints at mounting maintenance issues. It’s also important to monitor code churn – frequent updates or reverts – and how long it takes new developers to get up to speed. These factors can reveal hidden architectural problems. Consistently tracking these indicators can help you avoid hitting the dreaded "6-Month Wall."
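Most of these metrics come from standard tools, but some are easy to compute directly. As one example, the sketch below counts unused imports in Python modules with the standard-library `ast` module. It is a rough approximation (it ignores names referenced only in strings, `__all__`, or type comments), and linters such as pyflakes handle far more cases.

```python
import ast

def unused_imports(source: str) -> list[str]:
    """Names bound by import statements but never referenced as a bare name."""
    tree = ast.parse(source)
    imported = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                # `import os.path` binds `os`; `import numpy as np` binds `np`.
                imported.add(alias.asname or alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            for alias in node.names:
                if alias.name != "*":  # star imports can't be checked this way
                    imported.add(alias.asname or alias.name)
    # Attribute chains like `os.path.join` are rooted in a Name node (`os`),
    # so collecting Name ids covers them too.
    used = {node.id for node in ast.walk(tree) if isinstance(node, ast.Name)}
    return sorted(imported - used)

def unused_imports_per_file(files: dict[str, str]) -> float:
    """Average unused-import count across a set of Python source files."""
    if not files:
        return 0.0
    return sum(len(unused_imports(src)) for src in files.values()) / len(files)
```

Tracked per release alongside file size, complexity, and duplication, a rising average is an early hint that generated code is pulling in things nobody uses.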

Is refactoring enough, or do I need a rewrite?

Refactoring can help clean up code in the short term, but it often falls short when dealing with the deeper issues behind the "6-Month Wall" in AI-built apps. Problems like architectural erosion and mounting tech debt usually go beyond what refactoring can fix. When the system’s architecture no longer supports its current demands, a complete rewrite is often the only way to tackle challenges related to scalability and long-term maintainability. Simply put, refactoring may smooth out some rough edges, but it won’t solve the core structural problems.
